Oxford University released a new study on vaccine effectiveness this week, based on data from the ONS Infection Survey. Its headline finding was that, in the period dominated by the Delta variant, the vaccine effectiveness (VE) of the AstraZeneca jab against symptomatic infection declined from 97% to 71%, and that of the Pfizer jab from 97% to 84%. The researchers note that VE appears to wane with time, putting the rate at 7% per month for AstraZeneca and 22% per month for Pfizer.

One odd thing about these results is that the 97% initial VE for AstraZeneca is very high compared with other estimates, including the vaccine trial, which found it to be just 70.4%.

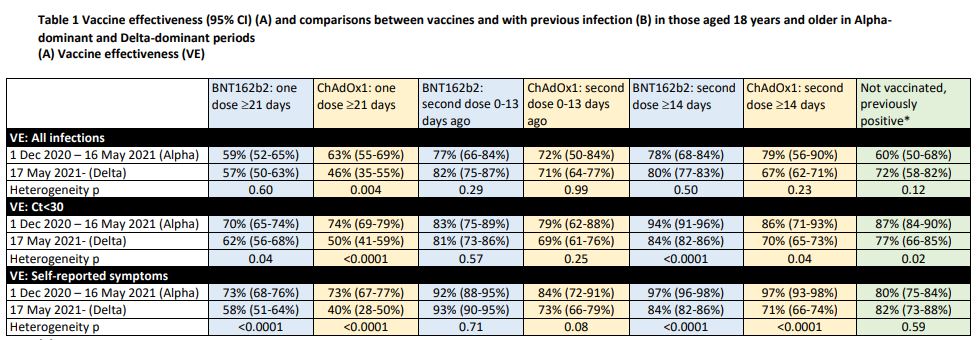

Here are their vaccine effectiveness results in full.

A second oddity is that, for all infections (any positive test), the researchers found Pfizer VE was just 78% in the Alpha period, well below the usual figure: a major Israeli study, for example, put it at 92%. But the researchers then found that it rose to 80% in the Delta period. A third oddity is that AstraZeneca VE was 71% in the first 13 days after the second dose, up from 46% after the first dose, even though the second dose is not yet supposed to have taken effect. Yet once it is supposed to kick in, after 14 days, VE drops to 67%. These are strange results indeed.

Another perplexing aspect is that the VE estimates against Delta in this study, while (mostly) lower than those against Alpha, are much higher than those indicated by recent data from Israel and the U.K., which have included estimates of 39% and 17%.

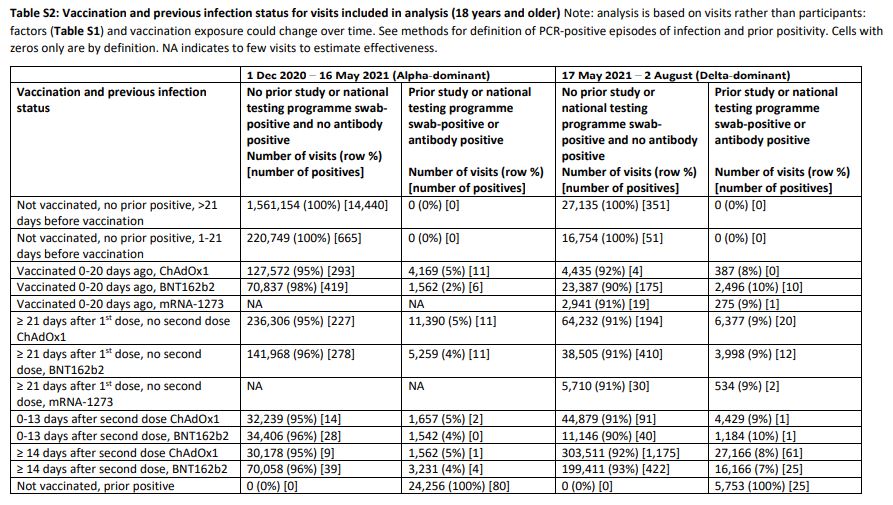

These various oddities aroused my suspicions, so I had a look at the raw data (shown below).

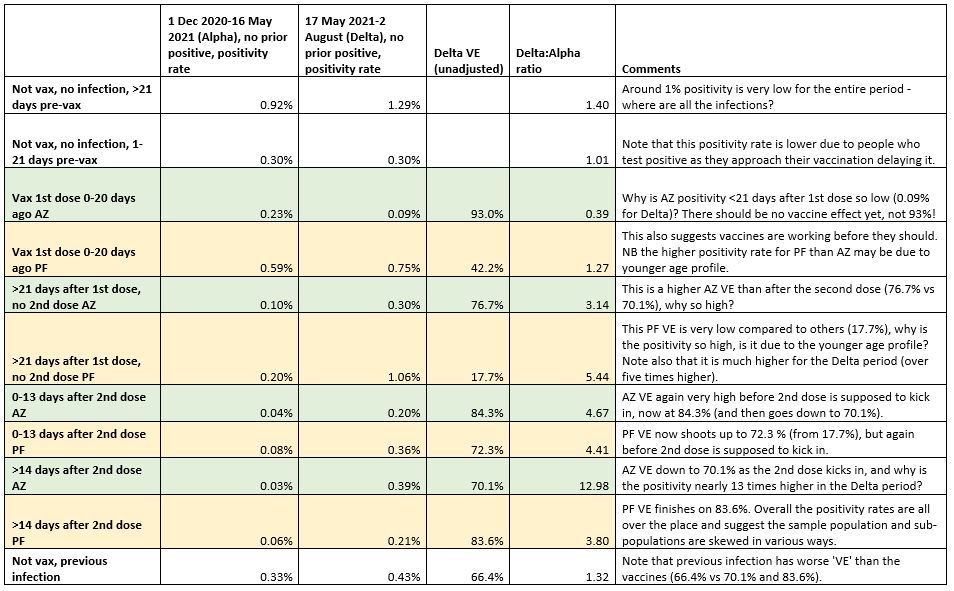

I converted some of these figures into positivity rates (positive tests as a share of all tests) to get a clearer idea of where the VE results were coming from. I also added an unadjusted calculation of VE, given as 100% minus (vaccinated positivity ÷ unvaccinated positivity). This assumes that those tested are representative of the population, but since the purpose of the ONS Infection Survey is to survey a representative sample of the population, this assumption didn’t seem unreasonable as a first pass.
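The unadjusted calculation described above can be written out as a short Python sketch. The function names are illustrative, and the counts in the usage line are made up purely to show the arithmetic; they are not figures from the ONS data.

```python
def positivity(positives: int, tests: int) -> float:
    """Share of tests that came back positive."""
    if tests <= 0:
        raise ValueError("tests must be positive")
    return positives / tests


def unadjusted_ve(pos_vax: int, tests_vax: int,
                  pos_unvax: int, tests_unvax: int) -> float:
    """Unadjusted VE in percent: 100% minus (vaccinated / unvaccinated positivity)."""
    unvax_rate = positivity(pos_unvax, tests_unvax)
    if unvax_rate == 0:
        raise ValueError("unvaccinated positivity is zero; VE is undefined")
    return 100.0 * (1.0 - positivity(pos_vax, tests_vax) / unvax_rate)


# Made-up counts for illustration only (not figures from the ONS data):
# 1% positivity in the vaccinated vs 5% in the unvaccinated.
print(f"{unadjusted_ve(10, 1_000, 50, 1_000):.1f}%")  # 80.0%
```

Note that nothing in the formula stops the result going negative: if the vaccinated group happens to have higher positivity than the unvaccinated group, the unadjusted VE comes out below zero.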

As can be seen (I have included comments in the table), these positivity rates and VE scores are all over the place. AstraZeneca VE hits 93% before the first dose is supposed to kick in, then drops to 76.7% as it kicks in, rises to 84.3% before the second dose kicks in, and drops again to 70.1% as it does so. For Pfizer, we again find the vaccine ‘working’ before the first dose is supposed to kick in, with VE of 42.2%. VE then drops to 17.7% as the first dose is supposed to start working, rises to 72.3% before the second dose is supposed to kick in, and reaches 83.6% as the second dose comes online. Surprisingly, on these figures previous infection is only 66.4% effective against new infection, lower than the vaccines.
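One way to make the pattern explicit is to list the unadjusted VE figures quoted above stage by stage and flag every step at which VE falls. The Python sketch below uses only the numbers given in this paragraph; the stage labels are paraphrases of the text (the exact day ranges are not stated here), and the names `STAGES`, `UNADJUSTED_VE` and `find_drops` are illustrative.

```python
# Stage labels paraphrase the article; exact day ranges are not given here.
STAGES = [
    "first dose given, not yet effective",
    "first dose effective",
    "second dose given, not yet effective",
    "second dose effective",
]

# Unadjusted VE (%) at each stage, as quoted in the text.
UNADJUSTED_VE = {
    "AstraZeneca": [93.0, 76.7, 84.3, 70.1],
    "Pfizer": [42.2, 17.7, 72.3, 83.6],
}


def find_drops(ve_by_stage):
    """Return (from_stage, to_stage, change) for each step where VE falls."""
    drops = []
    for i in range(1, len(ve_by_stage)):
        change = ve_by_stage[i] - ve_by_stage[i - 1]
        if change < 0:
            drops.append((STAGES[i - 1], STAGES[i], round(change, 1)))
    return drops


for vaccine, series in UNADJUSTED_VE.items():
    for before, after, change in find_drops(series):
        print(f"{vaccine}: {change:+.1f} points from '{before}' to '{after}'")
```

On these figures it flags both AstraZeneca falls (as the first dose and then the second dose are supposed to take effect) and the Pfizer fall as the first dose is supposed to start working.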

The researchers adjust these raw figures for a large number of “potential confounders”, though not for calendar week or background prevalence:

> The following potential confounders were adjusted for in all models as potential risk factors for acquiring SARS-CoV-2 infection: geographic area and age in years (see below), sex, ethnicity (white versus non-white as small numbers), index of multiple deprivation (percentile, calculated separately for each country in the UK), working in a care-home, having a patient-facing role in health or social care, presence of long-term health conditions, household size, multigenerational household, rural-urban classification, direct or indirect contact with a hospital or care-home, smoking status, and visit frequency.

Even so, as we have seen, their results still don’t make much sense or correspond well with other studies. One problem is that the survey doesn’t appear to be finding very many infections at all: an overall positivity rate of around 1% in the unvaccinated, both over the winter and in the Delta surge, is very low given that most estimates find that around 10-15% of the population was infected in each period. However, this may be because each person is tested several times before they test positive. Another point is that just 6% of the ONS survey tests during the Delta period were in the unvaccinated (43,889 out of 742,019), which seems very low given that a much greater proportion of the country was unvaccinated between May and August.
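The two quantities in this paragraph can be made concrete with a short Python sketch. The first function simply reproduces the unvaccinated share of tests from the counts in the text. The second is a rough back-of-envelope model of why repeated testing keeps per-test positivity low; it rests on an assumption made here for illustration, not on the study’s method: that tests are spread evenly over the period and that each infection stays test-positive for a fixed window. The 120-day period and 14-day window in the usage line are hypothetical values, not figures from the ONS survey, and the function names are illustrative.

```python
def share_of_tests(subgroup_tests: int, total_tests: int) -> float:
    """Fraction of all survey tests that came from one subgroup."""
    return subgroup_tests / total_tests


def cumulative_infected(per_test_positivity: float, period_days: float,
                        detectable_days: float) -> float:
    """Rough share of people infected over a period, given per-test positivity.

    Assumption (for illustration only): tests are spread evenly over the
    period and every infection stays test-positive for a fixed window, so
        positivity ~= cumulative infected * detectable_days / period_days.
    Only sensible when detectable_days is much shorter than period_days.
    """
    if period_days <= 0 or detectable_days <= 0:
        raise ValueError("period and window must be positive")
    return per_test_positivity * period_days / detectable_days


# Unvaccinated share of Delta-period tests, using the counts in the text.
print(f"{share_of_tests(43_889, 742_019):.1%}")  # 5.9%

# Hypothetical inputs: 1% positivity, a 120-day period, a 14-day detectable window.
print(f"{cumulative_infected(0.01, 120, 14):.1%}")  # 8.6%
```

The second result is very sensitive to the assumed detection window, so it is a way of framing the question rather than an answer to it.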

The main conclusion must be that the ONS sample is not suitable for this study. It does not appear to be representative enough of the population: it throws up implausible results even after adjustment, and it requires too much adjusting, a process that is always riddled with guesswork and is no substitute for starting with a better sample. This also raises questions about how suitable the ONS sample population is for estimating infection prevalence.

The researchers also found that viral load, and thus likely infectiousness, is no lower in vaccinated than in unvaccinated people who become infected. This adds to the evidence that vaccination does little to protect others, and undermines the case for vaccine passports, coercion and the vaccination of children.

To join in with the discussion please make a donation to The Daily Sceptic.
