Neil Ferguson’s team at Imperial College London (ICL) has released a new paper, published in Nature, claiming that if Sweden had adopted U.K. or Danish lockdown policies its Covid mortality would have halved. Although we have reviewed many epidemiological papers on this site, and especially from this particular team, let us go unto the breach once more and see what we find. The primary author on this new paper is Swapnil Mishra.

The paper’s first sentence is this:

The U.K. and Sweden have among the worst per-capita Covid mortality in Europe.

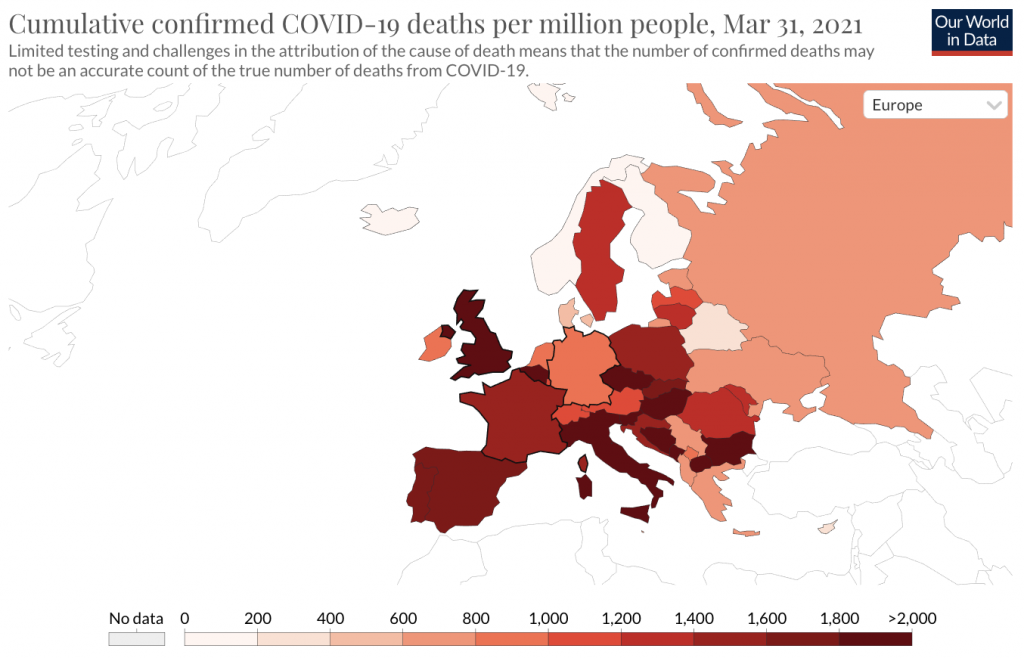

No citation is provided for this claim. The paper was submitted to Nature on March 31st, 2021. If we review a map of cumulative deaths per million on the received date then this opening statement looks very odd indeed:

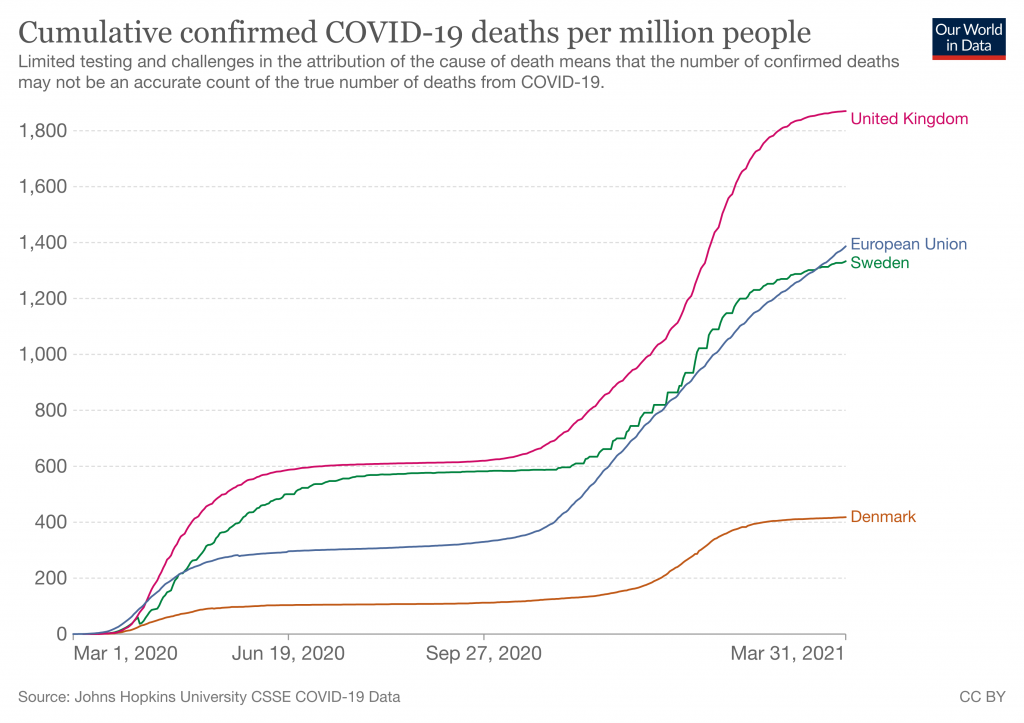

Sweden (with a cumulative total of 1,333 deaths/million) is by no means “among the worst in Europe” and indeed many European countries have higher totals. This is easier to see using a graph of cumulative results:

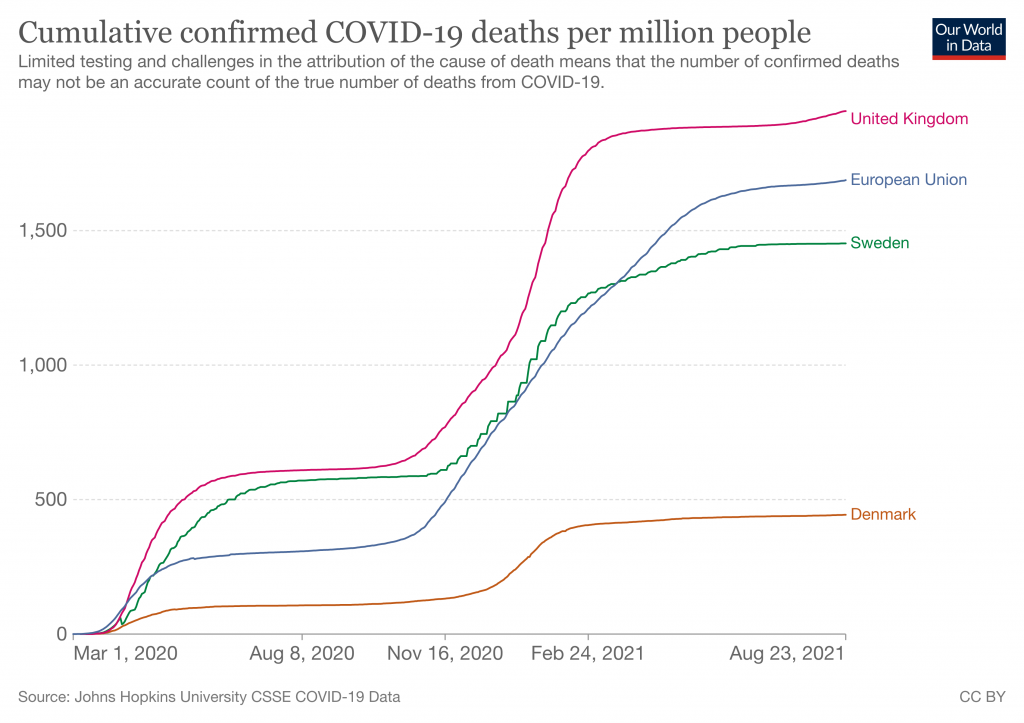

But that was in March, when the paper was submitted. We’re reviewing it in August because that’s when it was published. Over the duration of the journal’s review period this statement – already wrong at the start – became progressively more and more incorrect:

As always, we must note that these ‘death’ graphs can be heavily affected by testing levels, because Covid deaths are defined as any death within 28 days of a positive test. The U.K. tests much more than Sweden does. But putting that to one side, Sweden by now has significantly better results than the rest of the E.U. What’s going on here? A likely explanation is that although the paper was submitted in March it was actually written some time last year, probably starting around the end of the summer and finishing up in August. There then followed a strange many-month gap before they submitted it, and then many more months were added by the glacial peer-review process that journals use. We can see evidence of this timeline in the abstract, where they say:

We use two approaches to evaluate counterfactuals which transpose the transmission profile from one country onto another, in each country’s first wave from March 13th (when stringent interventions began) until July 1st, 2020.

More evidence comes from the upload dates on the released code, which was uploaded 10 months ago. In other words, Nature is publishing a paper about the fast-moving coronavirus situation that builds its entire case on obsolete data more than a year old, without explicitly noting that anywhere. In July 2020, Sweden and the U.K. did indeed have worse results than the rest of the E.U. However, as we now know, this meant nothing, and a year on the data looked very different.

Why did ICL wait so long before submitting this paper to Nature? No obvious explanation occurs. And why didn’t anyone notice that the claims were no longer true? Not for the first time, it appears nobody can actually be reading these papers adversarially before publication. Time and again we see that at major scientific journals the lights are on, but nobody’s home.

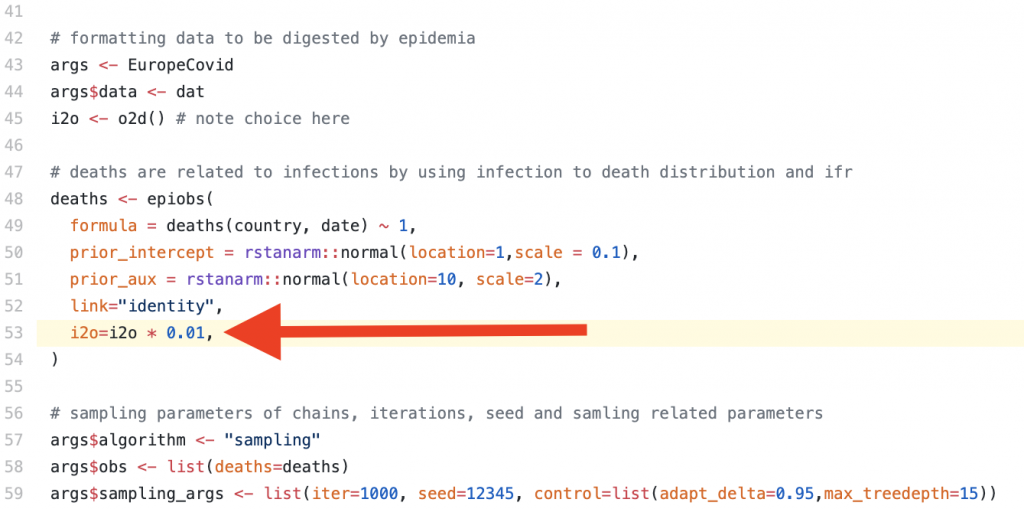

Seeing this made me wonder if they were once more engaging in a favourite trick of this team, by using Verity et al.’s obsolete infection:fatality ratio estimates from January 2020. And indeed they are:

The idea that 1% of all SARS-CoV-2 infections would lead to death was later disputed as being ~4x too high by a meta-study of seroprevalence data published by the WHO. This newer estimate was based on far larger sample sizes, and serosurveys give an ability to detect people who recently had mild disease without getting tested or reporting it at the time. It’s thus a much more scientifically robust method of IFR estimation than Verity’s paper, which, being written very early on, had to rely on media reports and questionably reliable information coming out of China. As the authors discuss in the supplementary material, using a lower IFR (they try 0.5%) means that the U.K.’s predicted mortality from adopting the Swedish strategy drops significantly due to the changed impact of herd immunity.
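To see why the assumed IFR matters so much for the herd immunity term, here is a minimal sketch of the arithmetic. The function name and the death and population figures are made up for illustration and are not taken from the paper; the only idea used is that implied infections equal deaths divided by IFR.

```python
def implied_attack_rate(deaths, population, ifr):
    """Share of the population infected, inferred as deaths / IFR / population."""
    return deaths / ifr / population

# Round, made-up numbers for illustration only (not taken from the paper).
deaths = 50_000
population = 50_000_000

# Compare a Verity-style 1% IFR with two lower assumptions.
for ifr in (0.01, 0.005, 0.0025):
    share = implied_attack_rate(deaths, population, ifr)
    print(f"IFR {ifr:.2%}: implied share infected = {share:.0%}")
```

Halving the assumed IFR doubles the share of the population that must already have been infected to explain the same death count, which is why a model's estimate of accumulated immunity, and therefore of counterfactual mortality, moves so much with this one input.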

Who is responsible for this situation? Nature appears to be knowingly publishing a paper on Covid that makes claims in the present tense, but which is in reality so out of date that the very first sentence is factually false. This is not merely useless but actively damaging because non-academic readers (i.e., politicians and public health officials) will reasonably assume that claims published by scientists about Covid in August 2021 were actually written in August and have some relevance to the current situation. Nowhere is it explicitly stated at what time the analysis was believed to be accurate: it must instead be inferred from the choice of datasets and audit trails left on the source code hosting site they use.

Overall approach

Moving on. What does the model actually do?

The core concept is to try and calculate the changing infectiousness of SARS-CoV-2 for each of the U.K., Sweden and Denmark over time, then ‘graft’ the generated timeseries for R(t) onto the other countries. As is typical for this team, the authors assume that changes in Rt are driven only by government interventions or voluntary behavioural changes, and thus by transposing Rt onto other countries they claim to be calculating what would have happened if different countries had adopted each other’s policies. They try two different approaches to this, an ‘absolute’ and a ‘relative’ approach.
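To make the ‘grafting’ idea concrete, here is a toy sketch in Python. It is not the authors’ code. The renewal equation, the generation interval, the R(t) curves and the reading of ‘absolute’ (the recipient inherits the donor’s R(t) outright) versus ‘relative’ (the recipient’s starting R is scaled by the donor’s proportional change) are all my assumptions for illustration.

```python
import numpy as np

def simulate_infections(rt, gen_interval, seed=10.0):
    """Discrete renewal equation: I[t] = R[t] * sum_s I[t-s] * g[s]."""
    n = len(rt)
    infections = np.zeros(n)
    infections[0] = seed
    for t in range(1, n):
        # Weight past infections by the generation-interval distribution.
        lags = np.arange(1, min(t, len(gen_interval)) + 1)
        pressure = np.sum(infections[t - lags] * gen_interval[lags - 1])
        infections[t] = rt[t] * pressure
    return infections

def graft_absolute(donor_rt):
    """Assumed 'absolute' transposition: recipient inherits the donor's R(t)."""
    return donor_rt.copy()

def graft_relative(donor_rt, recipient_rt):
    """Assumed 'relative' transposition: apply the donor's proportional change
    from its starting value to the recipient's own starting value."""
    return recipient_rt[0] * donor_rt / donor_rt[0]

# Made-up R(t) curves for two hypothetical countries over 60 days.
days = np.arange(60)
rt_a = np.where(days < 20, 3.0, 0.8)  # donor: high start, sharp drop on day 20
rt_b = np.where(days < 20, 2.0, 1.0)  # recipient: lower start, milder drop

gen = np.array([0.2, 0.4, 0.3, 0.1])  # toy generation interval, sums to 1

observed_b = simulate_infections(rt_b, gen).sum()
absolute_b = simulate_infections(graft_absolute(rt_a), gen).sum()
relative_b = simulate_infections(graft_relative(rt_a, rt_b), gen).sum()

print(f"observed: {observed_b:.0f}")
print(f"absolute graft: {absolute_b:.0f}")
print(f"relative graft: {relative_b:.0f}")
```

Even in this toy setup the two grafts pull in opposite directions: the relative graft yields fewer infections than the recipient’s own curve, while the absolute graft yields more, because it also imports the donor’s higher starting R.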

There are many problems with this methodology.

The study of only the U.K., Sweden and Denmark has no scientific basis. Why Denmark and not, say, France? This selection is very obviously politically motivated. In fact, the entire paper is basically a policy paper designed to influence politicians, not answer any question about viruses that a real scientist might ask.

With the benefit of 2021 hindsight we can argue persuasively that lockdowns had no real impact on Covid. The most recent and effective demonstration of that was the U.K.’s ‘Freedom Day’ in which cases dropped off a cliff just days after restrictions were relaxed, in defiance of the warnings of “international health leaders” that this would be “foolish” and “unethical”, a “threat to the world”, etc. There have been many other such events and analyses of global datasets show no correlation between lockdowns and health outcomes. Thus their underlying assumption that social policy is responsible for different outcomes is wrong. In fact, although they are well aware that there must be many factors influencing mortality outcomes, they explicitly disregard all of them: “While we cannot fully encompass the myriad of differences between each country, our analysis is nonetheless informative on best practice for control of future waves of the Covid pandemic.”

Despite asserting that their analysis can tell lawmakers what to do in future epidemics, they later admit that “our counterfactual scenarios should be interpreted as a exchange of both population behaviour and government policy between donor and recipient countries”. This is important for them to admit because they tried to explain why Covid has varying infectiousness in different countries by reference to “cultural differences”, which they boil down to a single statistic about the proportion of single-person households in each country. But this is illogical nonsense. Even if we (wrongly) assume that all differences in observed outcomes are to do with policy and culture, governments cannot magically make the U.K. population become Danish or vice-versa. Any analysis that assumes this and claims to be “informative on best practice” is wrong and should have been dropped during peer review.

The paper has another difficulty with being “informative”. Although the authors propose two different approaches to try and answer the same underlying question, the two approaches give totally different answers. For example: “If Denmark followed U.K. policies, our relative approach estimates that mortality would not have been markedly different, although our absolute approach implies that mortality would have been more than twice that observed.” Their calculations aren’t even consistent with each other, yet the paper provides no specific recommendation on which approach is supposed to yield the best answer.

Other problems include an inability to actually calculate Rt from death data (“the high variance of this distribution leads to high uncertainty in Rt estimates”), even though their entire analysis is based on the presumed integrity of that calculation, and an implausibly high sensitivity to the exact starting date of policy changes (“a three-day difference in the introduction of measures can lead to twofold differences in mortality”). The strength of this connection in their model is absurd and would appear to be strongly motivated by ICL’s attempted rewriting of history to one of: “If only the Government had listened to us sooner everything would have been far better.”
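It helps to see where a “twofold” figure can come from mechanically. The sketch below assumes pure, unchecked exponential growth right up to the moment measures are introduced. The function name and the doubling times are mine, chosen for illustration, and none of this comes from the paper’s code.

```python
def mortality_multiplier(delay_days, doubling_time_days):
    """Factor by which epidemic size grows during a delay, assuming pure
    exponential growth with a fixed doubling time (an illustrative assumption)."""
    return 2.0 ** (delay_days / doubling_time_days)

# A three-day delay under different assumed doubling times.
for doubling_time in (3.0, 6.0, 12.0):
    factor = mortality_multiplier(3.0, doubling_time)
    print(f"doubling time {doubling_time:g} days -> multiplier {factor:.2f}")
```

A three-day delay only doubles the outcome if growth is assumed to continue at a three-day doubling time until the intervention lands. The result is baked into that assumption, so the sensitivity is only as credible as the premise that nothing other than the intervention was slowing the virus down.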

Conclusion

Given the history of this department, it’s no surprise that ICL is still churning out delusional and misleading epidemiology papers. They will continue doing so for as long as they’re funded. Analysing each and every one is a futile effort due to the sheer scale at which academia operates (e.g. this paper alone has 19 authors). But we can nonetheless learn some more about bad science by reading them. This paper shows all the usual hallmarks of an academic sector that’s gone off the rails:

- A grotesque level of data cherry picking.

- A publishing process so slow that the claims are entirely wrong on the date of publication, and wrong from literally the first sentence.

- A delusional belief that their work is “informative” to policy makers, despite implicitly arguing that entire societies can be transplanted from one country to another.

Who is ultimately responsible for stopping this? It must be the funders, who for this paper include:

- The National Institute for Health Research

- The Bill and Melinda Gates Foundation

- The U.K. Medical Research Council

- Community Jameel (a Saudi family foundation)

- Microsoft, who donated free compute time on Azure

- And finally, universities and other institutions who subscribe to Nature despite its history of publishing misleading papers

The theme here is that none of these organisations is paying close attention to what’s actually being written, apparently including the journals and peer reviewers. For funders, giving away money is not the means but the end. Until research is funded by people who actually care about the utility of the results our society will continue to be flooded with highly evolved scientism, of which the output of the ICL Epidemiology Department is a textbook example.

Mike Hearn is a former Google software engineer. You can read his blog here.

