Back in October, when critics rounded on the UKHSA for publishing vaccine data that didn’t fit the narrative, front and centre of their complaints was the claim that the agency was using poor estimates of the size of the unvaccinated population, and thus underestimating the infection rate in the unvaccinated. Cambridge’s Professor David Spiegelhalter didn’t hold back, writing on Twitter that it was “completely unacceptable” for the agency to “put out absurd statistics showing case-rates higher in vaxxed than non-vaxxed” when it is “just an artefact of using hopelessly biased NIMS population estimates”.

To the UKHSA’s credit, while it conceded other points, it never gave in on this one, sticking to its view that the National Immunisation Management System (NIMS) was the “gold standard” for these estimates. It pointed out that ONS population estimates have problems of their own, not least that for some age groups the ONS supposes there to be fewer people in the population than the Government counts as being vaccinated.

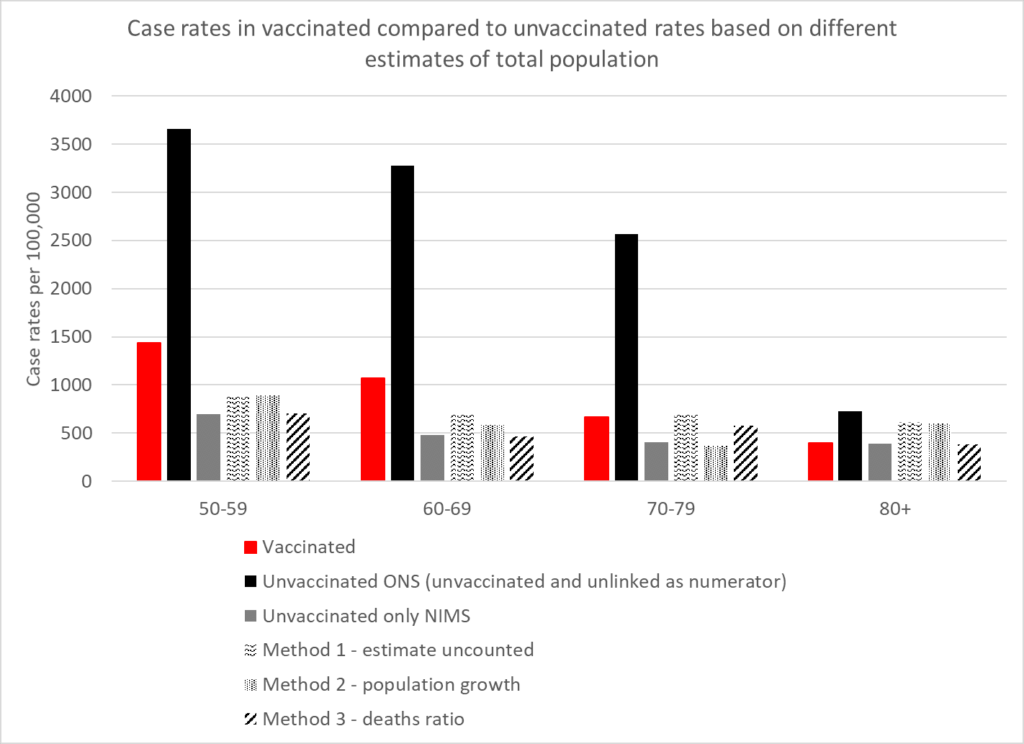

How can we know which estimates are more accurate? A group of experts from HART has estimated the size of the unvaccinated population independently of the ONS and NIMS figures. All three of the methods they used produced estimates in broad agreement with NIMS, whereas the ONS estimate was a much lower outlier.

The first method involves recognising that people not within the NHS database system still catch Covid and still get tested. Assuming these people have the same infection rates per 100,000 people as the unvaccinated, you can calculate how many people there are outside of the database system and add these to the NIMS totals.
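The arithmetic behind this first method can be sketched in a few lines of Python. This is an illustration under stated assumptions, not HART's actual calculation: the function name and the example inputs (5,000 cases and a rate of 1,000 per 100,000) are hypothetical placeholders, not figures from the analysis.

```python
def estimate_people_outside_database(unlinked_cases: float,
                                     unvaccinated_rate_per_100k: float) -> float:
    """Estimate how many people sit outside the database system.

    Assumes, as the method does, that these people are infected at the
    same rate per 100,000 as the unvaccinated.
    """
    if unvaccinated_rate_per_100k <= 0:
        raise ValueError("rate per 100,000 must be positive")
    return unlinked_cases / unvaccinated_rate_per_100k * 100_000


# Hypothetical inputs, for illustration only (not figures from the analysis).
example_unlinked_cases = 5_000
example_rate_per_100k = 1_000.0

people_outside = estimate_people_outside_database(
    example_unlinked_cases, example_rate_per_100k
)
print(f"Estimated people outside the database: {people_outside:,.0f}")
```

The resulting figure would then be added to the NIMS total to give the revised population estimate.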

The second method involves looking at the rate of growth in the number of people with an NHS number, which has been remarkably steady at around 2.9% per year. If you assume that people who are not yet registered with the NHS will sometimes become sick enough to seek healthcare, and thus have a record created for them, then applying this growth rate to the 2011 ONS population estimates gives another figure for the total population.
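As a rough sketch of what applying a steady growth rate involves, the Python below compounds a base population forward year by year. The ten-year horizon (2011 to 2021) and the base population of one million are assumptions made purely for illustration; the analysis itself may handle the projection differently.

```python
def project_population(base_population: float,
                       annual_growth_rate: float,
                       years: int) -> float:
    """Compound a base population forward at a constant annual growth rate."""
    return base_population * (1 + annual_growth_rate) ** years


ANNUAL_GROWTH = 0.029   # the roughly 2.9% per year quoted in the text
YEARS_SINCE_2011 = 10   # assumption: projecting from 2011 to 2021

# Hypothetical base population, for illustration only.
example_base = 1_000_000
projected = project_population(example_base, ANNUAL_GROWTH, YEARS_SINCE_2011)
growth_factor = projected / example_base
print(f"Growth factor over {YEARS_SINCE_2011} years: {growth_factor:.3f}")
```

On these assumptions, a decade of compounding at 2.9% raises the starting figure by roughly a third.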

The third method involves assuming that, in low-Covid weeks, deaths within an age bracket should occur at a similar rate in the vaccinated and the unvaccinated, allowing the size of the total population to be inferred from the percentage of deaths that occur in the unvaccinated.
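The logic of this third method can be sketched as follows. If death rates are equal in both groups, the unvaccinated share of deaths equals the unvaccinated share of the population, so the total follows from the number of vaccinated people. The sketch assumes the vaccinated count is known directly from vaccination records; the function name and the example numbers are hypothetical.

```python
def infer_population_from_deaths(vaccinated_population: float,
                                 unvaccinated_share_of_deaths: float):
    """Infer total and unvaccinated population for one age bracket.

    Assumes deaths occur at the same rate in both groups, so the
    unvaccinated share of deaths equals the unvaccinated share of the
    population: share = unvaccinated / (unvaccinated + vaccinated).
    """
    if not 0 <= unvaccinated_share_of_deaths < 1:
        raise ValueError("share must be at least 0 and below 1")
    total = vaccinated_population / (1 - unvaccinated_share_of_deaths)
    unvaccinated = total - vaccinated_population
    return total, unvaccinated


# Hypothetical inputs, for illustration only (not figures from the analysis).
example_total, example_unvaccinated = infer_population_from_deaths(
    vaccinated_population=750_000,
    unvaccinated_share_of_deaths=0.25,
)
print(f"Inferred total: {example_total:,.0f}")
print(f"Inferred unvaccinated: {example_unvaccinated:,.0f}")
```

With 750,000 vaccinated people and a quarter of deaths occurring in the unvaccinated, the inferred total is one million, of whom 250,000 are unvaccinated.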

The chart above shows the reported infection rates implied by each of the five estimates. They show that the ONS is a clear outlier, with its estimates sitting far too low, and that NIMS is likely to be much more accurate. The ONS puts the unvaccinated population at around 4.59 million whereas NIMS puts it at 9.92 million, a difference of 5.33 million. That’s a lot of people to leave out of the estimates, and suggests, among other things, that the ONS has not adequately estimated the magnitude of illegal immigration into the country.
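To see why the choice of denominator matters so much, the sketch below computes a rate per 100,000 for the same number of cases using each of the two population figures quoted above. The case count is a hypothetical placeholder; only the two population estimates come from the text.

```python
ONS_UNVACCINATED = 4_590_000    # ONS estimate quoted in the text
NIMS_UNVACCINATED = 9_920_000   # NIMS estimate quoted in the text


def rate_per_100k(cases: float, population: float) -> float:
    """Cases per 100,000 people."""
    return cases / population * 100_000


# Hypothetical case count, for illustration only.
example_cases = 100_000

rate_ons = rate_per_100k(example_cases, ONS_UNVACCINATED)
rate_nims = rate_per_100k(example_cases, NIMS_UNVACCINATED)

print(f"Gap between estimates: {NIMS_UNVACCINATED - ONS_UNVACCINATED:,}")
print(f"Rate with ONS denominator:  {rate_ons:,.0f} per 100,000")
print(f"Rate with NIMS denominator: {rate_nims:,.0f} per 100,000")
print(f"ONS-based rate is {rate_ons / rate_nims:.2f} times the NIMS-based rate")
```

Whatever the case count, the ONS denominator produces an unvaccinated infection rate a little over twice as high as the NIMS denominator does.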

As well as vindicating the UKHSA in its decision to stick with NIMS over ONS, HART’s analysis also indicates that, contrary to the assertions of Prof Spiegelhalter, the UKHSA data showing infection rates higher in the vaccinated than in the unvaccinated is not a mere artefact of using the wrong population estimates. There may be other biases in it, but this is not one of them.

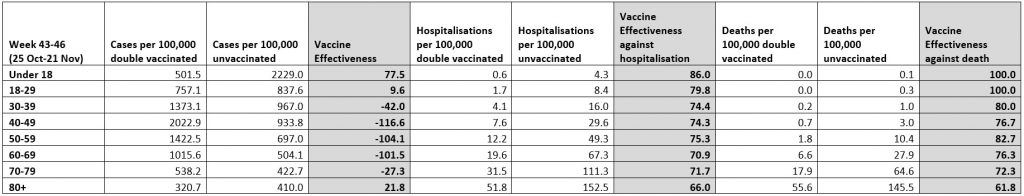

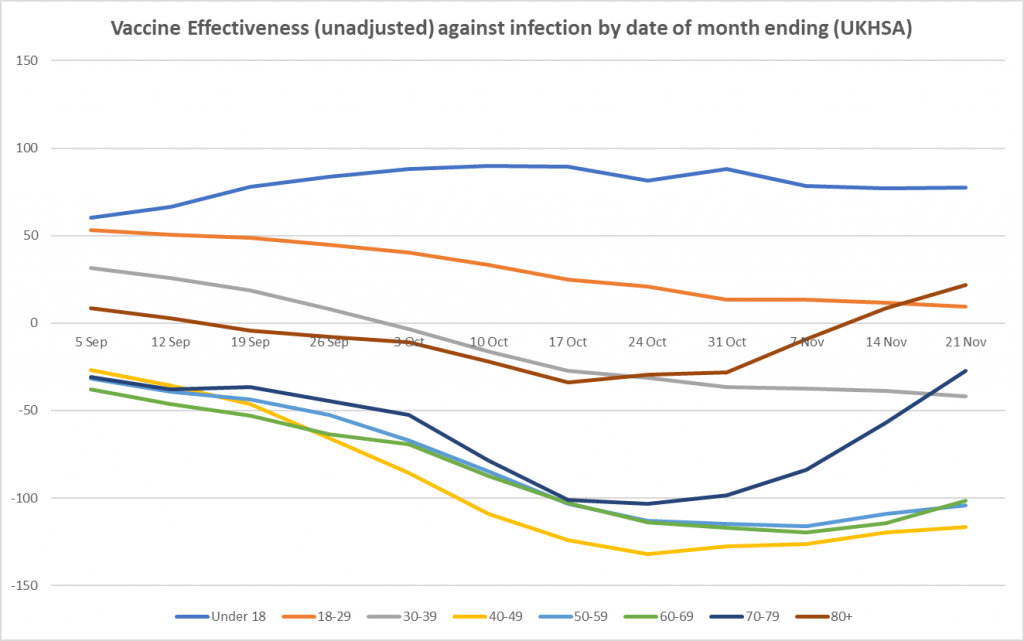

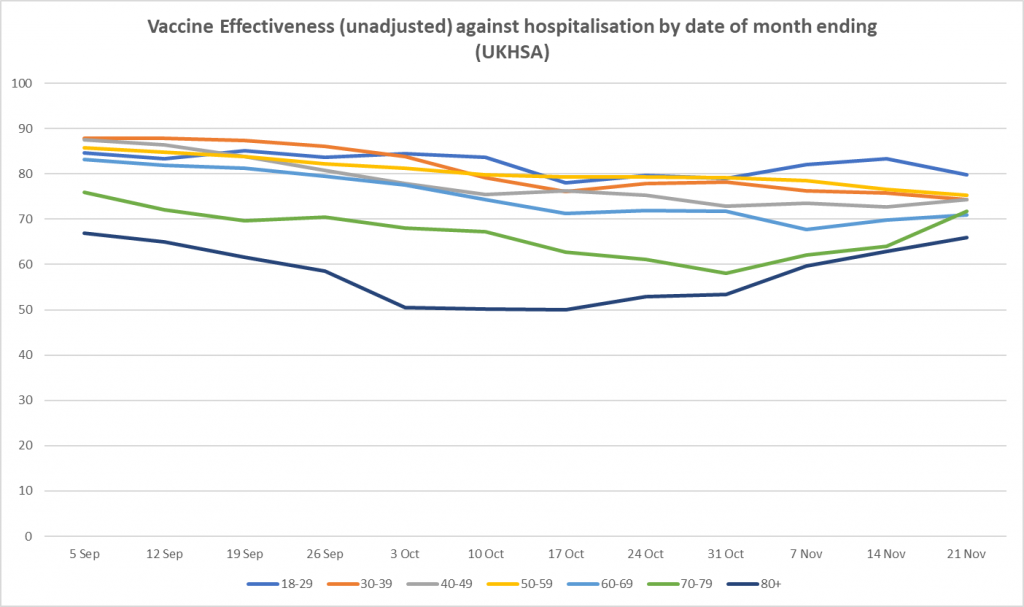

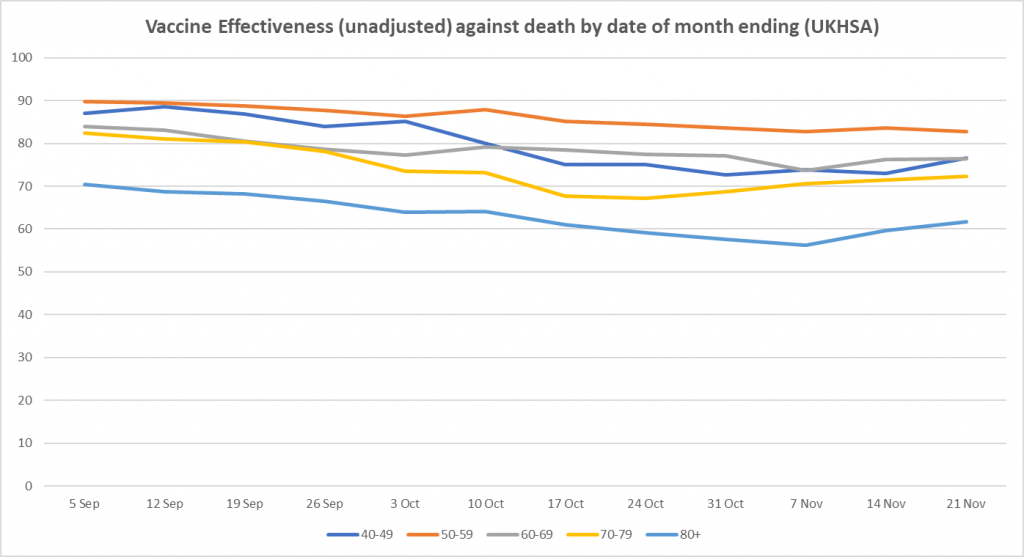

Here is the weekly update on unadjusted vaccine effectiveness based on the raw data in the UKHSA Vaccine Surveillance report. The unadjusted vaccine effectiveness estimates against infection have remained low in all adult age brackets this week, particularly in those aged 40-70, though there is little sign of further decline. In the older age groups (over 40), the recent vaccine effectiveness revival continues, possibly as a result of the third doses. There is also a sign of a rise in vaccine effectiveness against hospitalisation in the over-70s.
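For readers unfamiliar with the calculation, the sketch below assumes the standard definition of unadjusted vaccine effectiveness: one minus the ratio of the rate in the vaccinated to the rate in the unvaccinated. The function name and the example rates are hypothetical and are not taken from the UKHSA report.

```python
def unadjusted_vaccine_effectiveness(vaccinated_rate: float,
                                     unvaccinated_rate: float) -> float:
    """Return 1 minus the ratio of vaccinated to unvaccinated rates.

    Both rates must be on the same scale (for example, per 100,000).
    A negative result means the rate is higher in the vaccinated.
    """
    if unvaccinated_rate <= 0:
        raise ValueError("unvaccinated rate must be positive")
    return 1 - vaccinated_rate / unvaccinated_rate


# Hypothetical rates per 100,000, for illustration only.
ve_lower_in_vaccinated = unadjusted_vaccine_effectiveness(500, 1_000)
ve_higher_in_vaccinated = unadjusted_vaccine_effectiveness(1_500, 1_000)

print(f"Rate halved in vaccinated: {ve_lower_in_vaccinated:.0%}")
print(f"Rate 50% higher in vaccinated: {ve_higher_in_vaccinated:.0%}")
```

Because the unvaccinated rate sits in the denominator, an estimate of this kind is sensitive to the population figure used for the unvaccinated.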
