The Prime Minister may have acknowledged reality and stated that being double-vaccinated “doesn’t protect you against catching the disease, and it doesn’t protect you against passing it on”, but others appear to remain in denial.

On Sunday I asked whether, now that the PM had let the cat out of the bag, the media would start reporting properly on the UKHSA data showing higher infection rates in the vaccinated than in the unvaccinated. It appears the answer is no, at least if the Times’s Tom Whipple is any indication.

In a typically mean-spirited piece – in which anyone who doesn’t agree with his favoured scientist of the hour is smeared as a conspiracy theorist and purveyor of misinformation – Whipple quotes Cambridge statistician Professor David Spiegelhalter, who heaps opprobrium on the U.K. Health Security Agency (UKHSA, the successor to Public Health England) for daring to publish data that contradicts the official vaccine narrative. Spiegelhalter says of the UKHSA vaccine surveillance reports:

> This presentation of statistics is deeply untrustworthy and completely unacceptable… I cannot believe that UKHSA is putting out graphics showing higher infection rates in vaccinated than unvaccinated groups, when this is simply an artefact due to using clearly inappropriate estimates of the population. This has been repeatedly pointed out to them, and yet they continue to provide material for conspiracy theorists around the world.

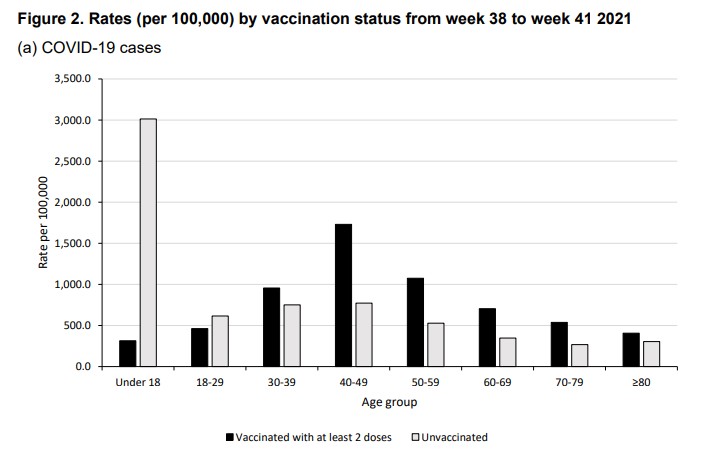

This is the graphic he is presumably referring to.

If Professor Spiegelhalter has a source for his claim that higher infection rates in the vaccinated are “simply an artefact” of erroneous population estimates, he doesn’t provide it.

Whipple says the data has been “seized upon around the world”.

> The numbers have been promoted by members of HART, a U.K. group that publishes vaccine misinformation. They have also been quoted on the Joe Rogan Experience podcast in the US, which reaches 11 million people.
>
> Appearing on that podcast, Alex Berenson, a U.S. journalist now banned from Twitter, specifically referenced the source to show it was reliable.

The UKHSA is adamant that it is doing nothing wrong. The Times quotes Dr Mary Ramsay, head of immunisation at the UKHSA, defending its use of the National Immunisation Management Service (NIMS): “Immunisation information systems like NIMS are the internationally recognised gold standard for measuring vaccine uptake.”

So Professor Spiegelhalter thinks that the gold standard gives “clearly inappropriate estimates of the population”, and using it is “deeply untrustworthy and completely unacceptable”? That may be his view, but the UKHSA can hardly be criticised for following the recognised standards for its work.

A more measured criticism is provided by Colin Angus, a statistician from the University of Sheffield, whom the Times quotes as saying that using NIMS data makes sense but that the “huge uncertainty” in the population estimates should be made clearer.

Whipple, however, goes further and claims that “using population data from other official sources shows, instead, that the protection of vaccines continues”. Yet he does not name those sources or explain how they support his claim.

For now, the UKHSA is defending its report (we’ll see how long it holds out for). But even so, Dr Ramsay is adamant that the report rules out using the data to estimate vaccine effectiveness: “The report clearly explains that the vaccination status of cases, inpatients and deaths should not be used to assess vaccine effectiveness and there is a high risk of misinterpreting this data because of differences in risk, behaviour and testing in the vaccinated and unvaccinated populations.”

This defence somewhat misses Professor Spiegelhalter’s criticism about population estimates. It is also misleading, in that the report doesn’t “clearly” explain that its data “should not be used to assess vaccine effectiveness”. What it says is that it is “not the most appropriate method to assess vaccine effectiveness and there is a high risk of misinterpretation”. But, as explained before, comparing population-based infection rates in the vaccinated and unvaccinated is certainly a valid method of estimating unadjusted vaccine effectiveness, which is defined as the proportional reduction in the infection rate of the vaccinated relative to the unvaccinated (one minus the ratio of the two rates). While a complete study would then adjust those raw figures for potential systematic biases (with varying degrees of success), we shouldn’t necessarily expect those adjustments to be large or to change the picture radically. Indeed, when a population-based study from California, which showed vaccine effectiveness against infection declining fast, carried out these adjustments, the figures barely changed at all.
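The whole dispute turns on one simple formula: unadjusted vaccine effectiveness is one minus the ratio of the infection rate in the vaccinated to the rate in the unvaccinated. The minimal Python sketch below illustrates how sensitive that figure is to the estimated size of the unvaccinated population, which is the issue Professor Spiegelhalter and Colin Angus raise about NIMS. The function names `rate` and `unadjusted_ve` are hypothetical, and every number is made up for illustration; none are UKHSA figures.

```python
"""Hypothetical sketch: how the estimate of the unvaccinated population
drives unadjusted vaccine effectiveness (VE). All numbers are invented
for illustration and are NOT taken from the UKHSA reports."""


def rate(cases: int, population: float) -> float:
    """Infection rate: cases per person in the group."""
    if population <= 0:
        raise ValueError("population must be positive")
    return cases / population


def unadjusted_ve(cases_vax: int, pop_vax: float,
                  cases_unvax: int, pop_unvax: float) -> float:
    """Unadjusted VE = 1 - (rate in vaccinated / rate in unvaccinated).

    Positive values mean a lower infection rate in the vaccinated group,
    negative values a higher one. No adjustment is made for age,
    behaviour or testing differences between the groups.
    """
    return 1 - rate(cases_vax, pop_vax) / rate(cases_unvax, pop_unvax)


if __name__ == "__main__":
    # Hypothetical inputs: case counts and the vaccinated denominator are
    # held fixed; only the unvaccinated population estimate varies.
    cases_vax, pop_vax = 8_000, 8_000_000
    cases_unvax = 1_500
    for pop_unvax in (1_000_000, 1_500_000, 2_000_000):
        ve = unadjusted_ve(cases_vax, pop_vax, cases_unvax, pop_unvax)
        print(f"unvaccinated population {pop_unvax:>9,}: unadjusted VE = {ve:+.0%}")
```

With these invented numbers, moving the unvaccinated denominator from 1.0 million to 2.0 million swings the unadjusted VE from about +33% through zero to about −33%, even though not a single case count changes. That is why the accuracy of the population estimate, and any later adjustment for bias, needs to be quantified rather than simply asserted.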

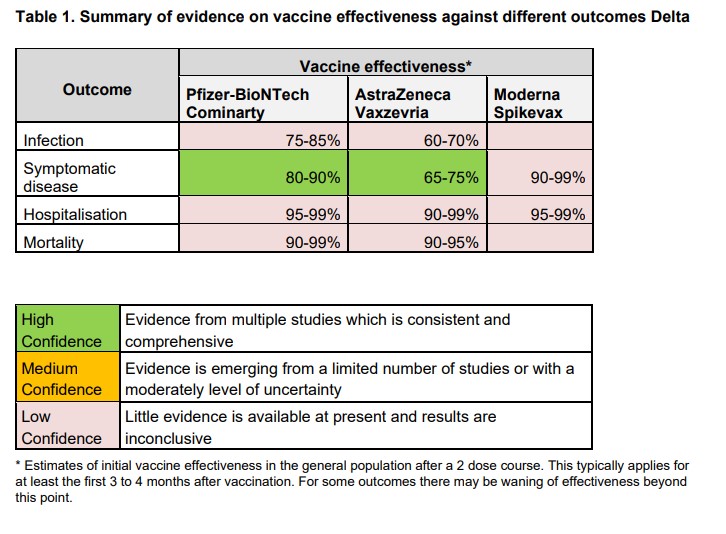

The UKHSA report adds: “Vaccine effectiveness has been formally estimated from a number of different sources and is described earlier in this report.” In fact, most of those estimates are reported as low confidence (see below), which means: “Little evidence is available at present and results are inconclusive.” While the report claims high confidence for its estimates against symptomatic disease, a footnote ties this to roughly the first 12 to 16 weeks: “This typically applies for at least the first three to four months after vaccination. For some outcomes there may be waning of effectiveness beyond this point.”

It is precisely this “waning of effectiveness” that the latest real-world data is giving us insight into. Rather than trying to discredit that data and those who report it by throwing around general, unquantified criticisms, scientists and academics like Professor Spiegelhalter should be redoubling efforts to provide constructive analysis to get to the bottom of what’s really going on with the vaccines. If there are issues with the population estimates then those need to be looked at, and if there are biases that need adjusting for then those need to be quantified. But do, please, get on with it – and lay off the smearing of those who raise the questions.

To join in with the discussion please make a donation to The Daily Sceptic.
