It appears the critics finally got to the U.K. Health Security Agency (UKHSA). The new Vaccine Surveillance report, released on Thursday, has been purged of the offending chart. That chart showed infection rates higher in the double-vaccinated than in the unvaccinated for all over-30s, and more than double for those aged 40-79.

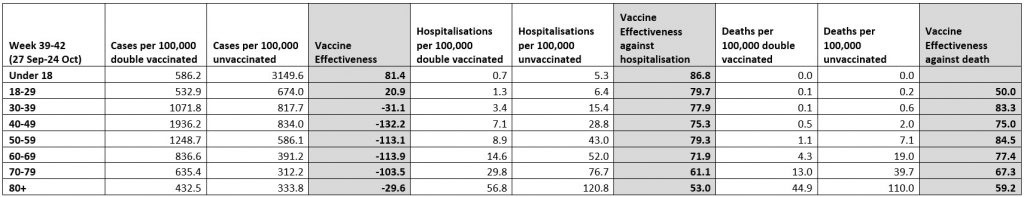

In its place we now have a table similar to the one below that I have been producing for the Daily Sceptic each week (though without the vaccine effectiveness estimates), and a whole lot more explanation and qualification.

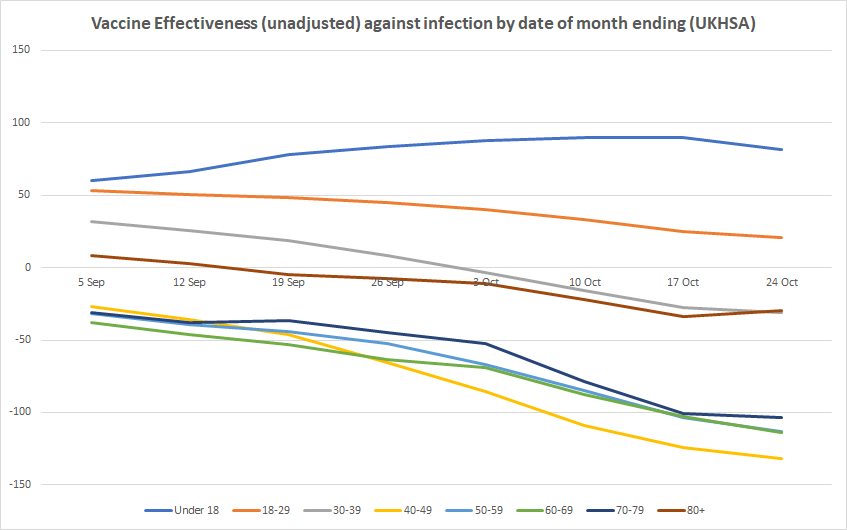

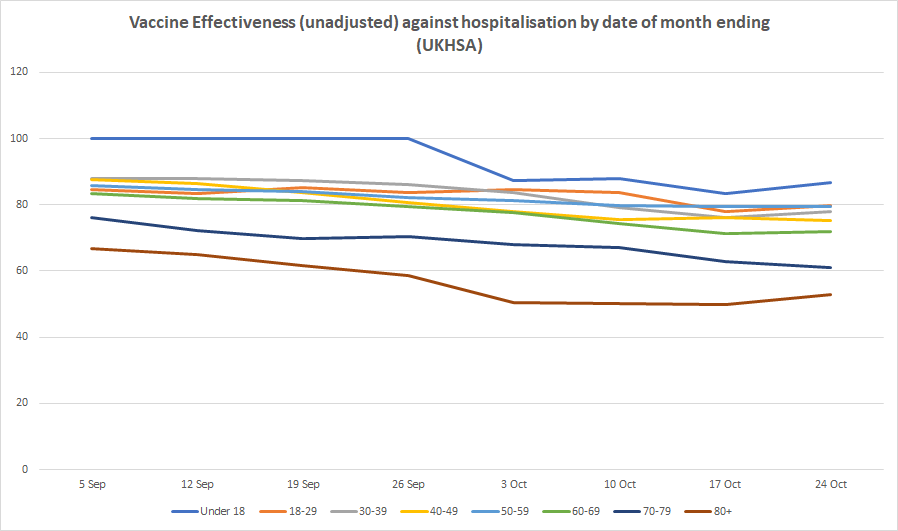

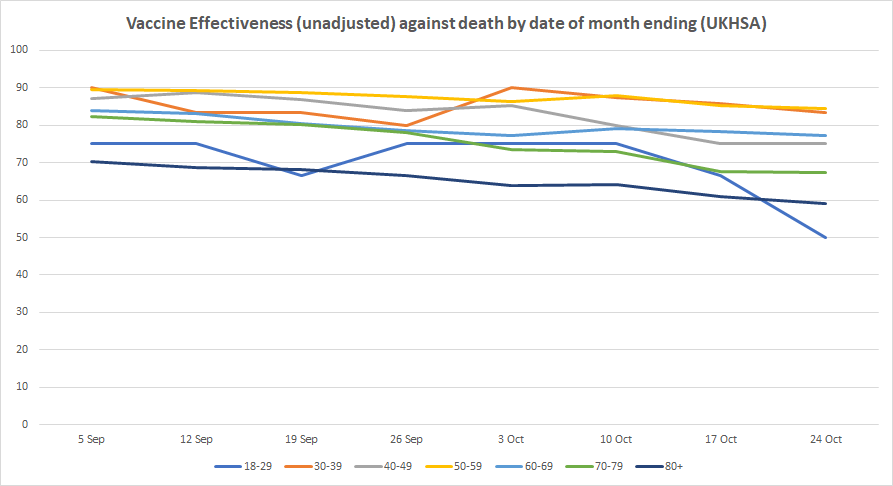

Here are our updated charts of unadjusted vaccine effectiveness over time from real-world data in England.

The figures this week continue to worsen for the vaccinated, with unadjusted vaccine effectiveness against infection hitting minus-31% for people in their 30s, minus-132% for people in their 40s, minus-113% for those in their 50s, minus-114% for those in their 60s, and minus-104% for those in their 70s. For those over 80 it rose slightly, from minus-34% last week to a still abysmal minus-30%. Vaccine effectiveness remains positive for those under 30, though for 18-29 year-olds it slipped again, to just 21%. It is still highly positive for those under 18, though it fell for the first time, to 81% from 90% the previous week. Vaccine effectiveness against hospitalisation and death remained largely stable this week, meaning there’s no sign yet of the sharp decline found in the recent Swedish study.
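Because unadjusted vaccine effectiveness is one minus the ratio of the infection rate in the vaccinated to the rate in the unvaccinated, each figure quoted above can be turned back into a plain rate ratio. The short sketch below does that arithmetic for this week's figures; the function name `rate_ratio_from_ve` is illustrative only and is not taken from any UKHSA code.

```python
# Unadjusted vaccine effectiveness (VE) is taken here to be
#   VE = 1 - (rate in vaccinated / rate in unvaccinated),
# so the implied vaccinated/unvaccinated rate ratio is simply 1 - VE.

def rate_ratio_from_ve(ve_percent: float) -> float:
    """Return the vaccinated/unvaccinated infection-rate ratio implied by a VE given in %."""
    return 1 - ve_percent / 100

# This week's unadjusted VE against infection by age band, as quoted in the text.
weekly_ve = {
    "under 18": 81,
    "18-29": 21,
    "30-39": -31,
    "40-49": -132,
    "50-59": -113,
    "60-69": -114,
    "70-79": -104,
    "80+": -30,
}

for band, ve in weekly_ve.items():
    ratio = rate_ratio_from_ve(ve)
    print(f"{band:>9}: VE {ve:+4d}% -> vaccinated rate is {ratio:.2f}x the unvaccinated rate")
```

For people in their 40s, for example, minus-132% corresponds to a vaccinated infection rate 2.32 times the unvaccinated rate, and every band from 40 to 79 comes out above 2.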

It was welcome to see the UKHSA robustly defend its use of the NIMS population data against the criticisms levelled at it by, among others, David Spiegelhalter, who called it “deeply untrustworthy and completely unacceptable”, with the higher infection rates in the vaccinated “simply an artefact due to using clearly inappropriate estimates of the population”. The report counters:

The potential sources of denominator data are either the National Immunisation Management Service (NIMS) or the Office for National Statistics (ONS) mid-year population estimates. Each source has its strengths and limitations which have been described in detail here and here.

NIMS may over-estimate denominators in some age groups, for example because people are registered with the NHS but may have moved abroad, but as it is a dynamic register, such patients, once identified by the NHS, are able to be removed from the denominator. On the other hand, ONS data uses population estimates based on the 2011 census and other sources of data. When using ONS, vaccine coverage exceeds 100% of the population in some age groups, which would in turn lead to a negative denominator when calculating the size of the unvaccinated population.

UKHSA uses NIMS throughout its COVID-19 surveillance reports including in the calculation rates of COVID-19 infection, hospitalisation and deaths by vaccination status because it is a dynamic database of named individuals, where the numerator and the denominator come from the same source and there is a record of each individual’s vaccination status. Additionally, NIMS contains key sociodemographic variables for those who are targeted for and then receive the vaccine, providing a rich and consistently coded data source for evaluation of the vaccine programme. Large scale efforts to contact people in the register will result in the identification of people who may be overcounted, thus affording opportunities to improve accuracy in a dynamic fashion that feeds immediately into vaccine uptake statistics and informs local vaccination efforts.
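The report's point about ONS coverage exceeding 100% is simple subtraction: the unvaccinated group is whatever is left of the population estimate after the vaccinated are taken away. The sketch below uses invented numbers for a single hypothetical age band (they are not NIMS or ONS figures, and the labels and function names are illustrative). It shows how a population estimate smaller than the vaccinated count leaves a negative unvaccinated denominator, for which no rate can be calculated.

```python
# Hypothetical illustration only: all counts below are invented, not NIMS or ONS data.

def unvaccinated_denominator(population: int, vaccinated: int) -> int:
    """Size of the unvaccinated group implied by a population estimate."""
    return population - vaccinated

def crude_rate_per_100k(cases: int, denominator: int) -> float:
    """Crude case rate per 100,000; undefined when the denominator is not positive."""
    if denominator <= 0:
        raise ValueError(f"non-positive denominator ({denominator:,}): rate is undefined")
    return cases / denominator * 100_000

vaccinated = 1_050_000      # people recorded as vaccinated in the band (hypothetical)
cases_unvaccinated = 2_000  # cases among the unvaccinated (hypothetical)

# Two hypothetical population estimates for the same band.
for source, population in [("larger estimate", 1_200_000), ("smaller estimate", 1_000_000)]:
    denom = unvaccinated_denominator(population, vaccinated)
    coverage = vaccinated / population
    try:
        rate = crude_rate_per_100k(cases_unvaccinated, denom)
        print(f"{source}: coverage {coverage:.0%}, unvaccinated {denom:,}, rate {rate:.0f} per 100k")
    except ValueError as err:
        print(f"{source}: coverage {coverage:.0%}, {err}")
```

The same arithmetic shows why the choice of source matters at all: every person dropped from the population estimate is dropped from the unvaccinated group, so the unvaccinated rate rises as the estimate shrinks.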

Much less welcome was the report’s reinforcement of the claim that its data should not be used to estimate vaccine effectiveness. Earlier in the week, Dr Mary Ramsay, Head of Immunisation at the UKHSA, had said: “The report clearly explains that the vaccination status of cases, inpatients and deaths should not be used to assess vaccine effectiveness and there is a high risk of misinterpreting this data because of differences in risk, behaviour and testing in the vaccinated and unvaccinated populations.” I had pointed out that this was false: the report did not “clearly explain” that its data “should not be used to assess vaccine effectiveness”. Rather, it said it was “not the most appropriate method to assess vaccine effectiveness and there is a high risk of misinterpretation”, which (correctly) leaves open that it can be used for this purpose provided the risks of misinterpretation are addressed.

Now, though, the text of the report aligns with Dr Ramsay’s statement. It says: “Comparing case rates among vaccinated and unvaccinated populations should not be used to estimate vaccine effectiveness against COVID-19 infection.”

It is difficult to overstate how outrageous this is. It amounts to the Government attempting to redefine a basic concept of immunology, vaccine effectiveness, because it is not currently giving the ‘correct’ answer for the Government’s narrative. It is in fact a false statement. Comparing case rates among vaccinated and unvaccinated groups not only may be used to estimate vaccine effectiveness, it is the definition of vaccine effectiveness, namely the reduction in infection rates in the vaccinated compared to the unvaccinated. Of course, any biases in the data ought to be identified and, where possible, adjusted or controlled for. But that doesn’t mean population data “should not be used” to estimate unadjusted vaccine effectiveness, as though such an estimate tells us nothing useful and is wholly misleading.
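The definition in the paragraph above translates directly into a calculation: work out the crude infection rate in each group, then take one minus their ratio. The sketch below uses invented counts for a hypothetical age band; the function names are illustrative and not taken from any UKHSA method.

```python
# Crude (unadjusted) vaccine effectiveness from case counts and group sizes.
# All numbers are hypothetical and chosen only to make the arithmetic easy to follow.

def crude_rate(cases: int, population: int) -> float:
    """Cases per person in a group over the reporting period."""
    return cases / population

def unadjusted_ve(cases_vax: int, pop_vax: int, cases_unvax: int, pop_unvax: int) -> float:
    """VE = 1 - (rate in vaccinated / rate in unvaccinated), returned as a fraction."""
    return 1 - crude_rate(cases_vax, pop_vax) / crude_rate(cases_unvax, pop_unvax)

# Hypothetical band: 900,000 vaccinated and 100,000 unvaccinated people.
# Case 1: vaccinated rate 1.0%, unvaccinated rate 0.5%.
print(f"{unadjusted_ve(9_000, 900_000, 500, 100_000):+.0%}")
# Case 2: vaccinated rate 0.1%, unvaccinated rate 0.5%.
print(f"{unadjusted_ve(900, 900_000, 500, 100_000):+.0%}")
```

A vaccinated rate twice the unvaccinated rate gives minus-100%, while a vaccinated rate one fifth of the unvaccinated rate gives 80%. Any adjustment for bias would modify the rates that go into this formula, not the formula itself.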

The absurdity of this thinly-disguised attempt to throw a sheet over unfavourable data is shown up by the fact that the reasons the UKHSA gives for the estimates being invalid are completely different to the main points its critics are making. Critics like David Spiegelhalter and Leo Benedictus (of Full Fatuous) are primarily concerned with alleged shortcomings of the population data, arguing that ONS data should be used instead. But, as noted, the UKHSA does not accept this criticism and defends its use of NIMS population data. In a normal world, this would mean that, with the main criticism dealt with, we would go back to using the data to estimate vaccine effectiveness.

But no, for the UKHSA has another, completely different reason why it deems it invalid to do so. The population data, it explains, gives only “crude rates that do not take into account underlying statistical biases in the data”:

There are likely to be systematic differences in who chooses to be tested and the Covid risk of people who are vaccinated. For example:

• people who are fully vaccinated may be more health conscious and therefore more likely to get tested for COVID-19

• people who are fully vaccinated may engage in more social interactions because of their vaccination status, and therefore may have greater exposure to circulating COVID-19 infection

• people who are unvaccinated may have had past COVID-19 infection prior to the four-week reporting period in the tables above, thereby artificially reducing the COVID-19 case rate in this population group, and making comparisons between the two groups less valid

These biases become more evident as more people are vaccinated and the differences between the vaccinated and unvaccinated population become systematically different in ways that are not accounted for without undertaken [sic] formal analysis of vaccine effectiveness.

This is all unquantified, and at least one of the claims, that vaccinated people are more likely to engage in social interaction, is questionable. Anyone who chooses to remain unvaccinated (as opposed to having a condition that makes vaccination inadvisable) is more likely to be relaxed about catching coronavirus, not least because, as per the third bullet point, they may already have had it.
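One way to put a number on the first of the report's bullet points, testing behaviour, is a sensitivity calculation. The sketch below rests on a simple assumed model, not on anything in the report: observed cases are true infections multiplied by each group's chance of being tested, so if the vaccinated are tested `k` times as often as the unvaccinated, the observed rate ratio is `k` times the true one. The testing ratios used are hypothetical values, not estimates, and the exposure and prior-infection points are not modelled at all.

```python
# Sensitivity sketch under an assumed multiplicative testing model:
#   observed rate ratio = true rate ratio * detection_ratio,
# where detection_ratio is how many times more often the vaccinated are tested.
# VE values are handled as fractions (e.g. -1.32 for minus-132%).

def corrected_ve(observed_ve: float, detection_ratio: float) -> float:
    """VE after dividing out an assumed vaccinated/unvaccinated testing ratio."""
    observed_ratio = 1 - observed_ve
    true_ratio = observed_ratio / detection_ratio
    return 1 - true_ratio

observed = -1.32  # unadjusted VE quoted earlier for people in their 40s
for k in (1.0, 1.25, 1.5, 2.0, 2.5):  # hypothetical testing ratios, not estimates
    print(f"testing ratio {k:.2f}: corrected VE {corrected_ve(observed, k):+.0%}")
```

Under this model, the observed minus-132% would only turn positive if the vaccinated were tested more than 2.32 times as often as the unvaccinated. Whether any particular ratio is realistic is exactly the kind of quantity a formal analysis would have to estimate rather than assume.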

Besides, as I’ve noted before, we don’t need to guess at how large these biases might be, because we can look at the unadjusted and adjusted figures for other population-based studies, like this one in California, and see that the differences are typically very small. While there may be more bias in the England data than the California data that needs adjusting for (why doesn’t the UKHSA just get on and do this?), that is no grounds for claiming that the unadjusted estimates tell us nothing of value and “should not” be made, as though we must assume any adjustments will be large.

Furthermore, it is not as though formal studies always control for these things anyway. A new study in the Lancet from Imperial College London estimates vaccine effectiveness against transmission by looking at infection rates in household contacts who are vaccinated and unvaccinated (and finds the vaccines do very little). But the study makes no attempt to control or adjust for previous infection or behaviour differences. If Imperial College can publish a peer-reviewed study estimating vaccine effectiveness without addressing these forms of bias, why should others be prohibited from estimating unadjusted vaccine effectiveness without adjusting for them? The concern about biases thus starts to look less like a genuine issue and more like message control: a pretext for forbidding unauthorised people from making use of the data.

On one level, of course, we can just ignore the UKHSA’s false claim that a comparison of infection rates in the vaccinated and unvaccinated “should not be used” to estimate vaccine effectiveness, and estimate it anyway. But actually we can’t just ignore it. The Daily Sceptic has already been ‘fact-checked’ by Full Fatuous over this, and such ‘fact checks’ are used by technology and media companies and even regulators to decide what they will censor or permit. This has a chilling effect on people’s willingness to report on the data.

What ought to happen now (though it won’t) is that the UKHSA removes the false claim that a comparison of infection rates among vaccinated and unvaccinated populations “should not be used to estimate vaccine effectiveness”, and does the honest thing by including estimates of unadjusted vaccine effectiveness in the report itself, just as it includes unadjusted estimates of the secondary attack rate based on raw data. If it can do one, why not the other? It should also provide adjusted estimates based on its own analysis. Indeed, back in the spring, when the vaccines appeared to be highly efficacious, PHE would sometimes include its own adjusted estimates of vaccine effectiveness, even when it had only ‘low confidence’ in the findings. Why not go back to doing that? Or are they only interested in doing this when it gives the ‘right’ answer? It’s beginning to look that way.
