The pandemic has shone a spotlight on public health ‘experts’.

Many initially opposed lockdown, before abruptly changing their view when doing so became politically convenient. They said that masks don’t work, only to turn around and back mandates. And after assuring us the vaccines would stop transmission, they were met with overwhelming data to the contrary.

In October of last year, I wrote about two studies in which ‘experts’ and laymen were asked to forecast weekly Covid numbers. ‘Experts’ performed somewhat better in the first study, but actually performed worse in the second. A possible explanation for the divergent results is that laymen in the second study were self-selected and hence better-informed about the subject matter.

These findings don’t inspire confidence in the ‘experts’ who’ve guided us through the pandemic. After all, the most basic task of science is to predict things, so if scientists can’t predict better than laymen, that suggests their theories are wrong. And if their theories are wrong, we probably shouldn’t listen to them – especially if they’re telling us to shut down the economy.

That’s public health scientists. Are social scientists any better? According to a new study: no, they’re not. (The study is still a pre-print, so it hasn’t been peer-reviewed.)

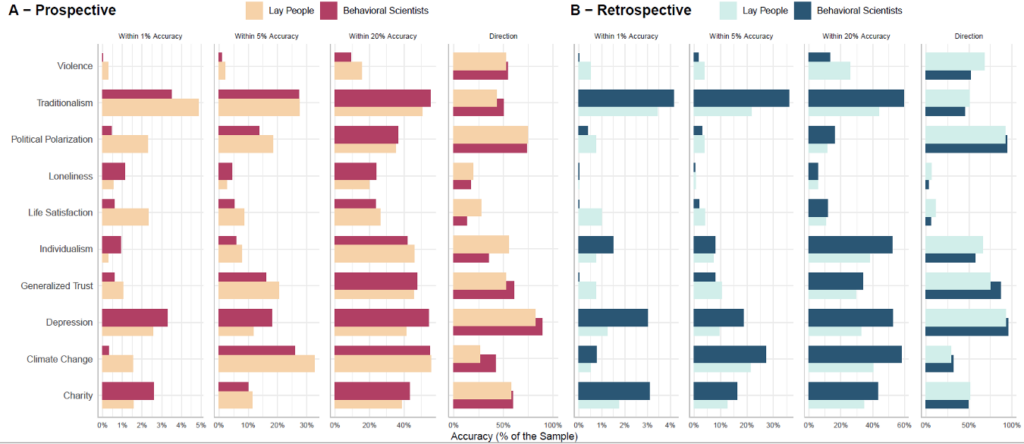

Cendri Hutcherson and colleagues asked both ‘experts’ and laymen to predict the size and direction of social change in the U.S. between April and October of 2020. There were ten different domains: prejudice, individualism, traditionalism, generalized trust, political polarisation, life satisfaction, depression, delay of gratification, birth rate, and attitudes to climate change.

Then in October of 2020, participants were asked to give retrospective estimates of the size and direction of social change over the preceding six months. Prospective and retrospective estimates were compared to objective indicators of social change, based on large representative surveys.

The researchers found that ‘experts’ and laymen were equally inaccurate, as shown in the image below.

Although the ‘experts’ did better in some domains, the laymen did better in others – so there was no overall advantage for the former group. Remarkably, this was true even when it came to retrospective estimates. (You might have assumed the ‘experts’ would at least do better here, since they might be familiar with the data.)

Interestingly, the researchers found in both groups that participants who expressed greater confidence in their estimates were less, not more, accurate. So beware of those who tell you something will or will not happen; the best forecasters are aware of the inherent uncertainty in human judgement.

It’s not just public health scientists who advise governments and other large institutions; it’s social scientists too. Hutcherson and colleagues’ findings should make us doubt that such people have any more insight than the rest of us.
