There follows a guest post by Daily Sceptic reader ‘Amanuensis’, as he is known in the comments section below the line. He is an ex-academic and senior Government researcher/scientist with experience in the field, who says he is “a bit cross about how science has been killed by Covid”. It was originally posted on his Substack page, but I thought it was such an excellent analysis of the UKHSA’s favoured test-negative case-control approach and its problems – especially why it seems consistently to exaggerate vaccine effectiveness – that Daily Sceptic readers should be treated to it too.

There has been much discussion in recent months about the effectiveness of the Covid vaccines, and this leads to thoughts about how vaccine effectiveness is calculated in the first place. The trouble with any attempt to calculate vaccine effectiveness is bias: are the vaccinated and unvaccinated similar enough to compare directly or, failing that, can we remove the bias to get an unbiased estimate?

As an example of bias, in the early days of the Covid vaccination programme the majority of the vaccinated were old and the unvaccinated were young – so if there was an effect of age, we’d get a biased result simply by comparing overall case rates (per 100,000) in the vaccinated and unvaccinated groups. In this case the bias might be resolved by splitting the analysis into different age groups, but what about other factors? Most of all, what is the bias associated with willingness to be vaccinated (perhaps the vaccinated are, in general, more likely to be the healthier ones)?
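To make the age example concrete, here is a minimal Python sketch using invented counts (not real data). Within each age band the vaccinated have half the case rate of the unvaccinated, i.e. 50% effectiveness; but because most of the vaccinated sit in the older band, where rates are higher, pooling everyone together makes the vaccine look harmful. The age bands, populations and case counts are all hypothetical.

```python
"""Sketch: how confounding by age distorts a crude rate comparison.

All counts are invented for illustration; they are not surveillance data.
"""

# (cases, population) for each age band and vaccination status
COUNTS = {
    "under 50": {"vaccinated": (50, 100_000), "unvaccinated": (900, 900_000)},
    "50 and over": {"vaccinated": (1_800, 900_000), "unvaccinated": (400, 100_000)},
}


def rate_per_100k(cases, population):
    """Case rate per 100,000 people."""
    return 100_000 * cases / population


def ve_from_rates(rate_vaccinated, rate_unvaccinated):
    """Vaccine effectiveness as one minus the rate ratio."""
    return 1 - rate_vaccinated / rate_unvaccinated


def crude_ve(counts):
    """Pool all age bands together, ignoring age entirely."""
    totals = {"vaccinated": [0, 0], "unvaccinated": [0, 0]}
    for band in counts.values():
        for status, (cases, population) in band.items():
            totals[status][0] += cases
            totals[status][1] += population
    return ve_from_rates(
        rate_per_100k(*totals["vaccinated"]),
        rate_per_100k(*totals["unvaccinated"]),
    )


def ve_by_age(counts):
    """Compute vaccine effectiveness separately within each age band."""
    return {
        age: ve_from_rates(
            rate_per_100k(*band["vaccinated"]),
            rate_per_100k(*band["unvaccinated"]),
        )
        for age, band in counts.items()
    }


if __name__ == "__main__":
    print(f"crude VE: {crude_ve(COUNTS):.1%}")
    for age, ve in ve_by_age(COUNTS).items():
        print(f"VE, {age}: {ve:.1%}")
```

With these numbers the crude comparison gives a large negative effectiveness, while each age band on its own shows 50%.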

Some time ago, statisticians came up with a really great way to remove rather a lot of the ‘difficult’ bias – it is called the Test Negative Case Control approach (TNCC). With this approach you don’t simply count infections, but compare the rates of infections amongst those who get tested – more specifically, you compare the ratio of positive to negative results in the vaccinated against the positive-to-negative ratio in the unvaccinated groups.
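In symbols (notation introduced here for illustration), write V+ and V- for the numbers of vaccinated people testing positive and negative, and U+ and U- for the unvaccinated. The crude TNCC calculation is then as follows, shown with invented counts; real studies also adjust for factors such as age and calendar time, usually by matching or regression.

```latex
\mathrm{OR} = \frac{V_{+} / V_{-}}{U_{+} / U_{-}}, \qquad \mathrm{VE} = 1 - \mathrm{OR}

% Worked example with invented counts: V+ = 100, V- = 400, U+ = 500, U- = 500
\mathrm{OR} = \frac{100 / 400}{500 / 500} = 0.25, \qquad \mathrm{VE} = 1 - 0.25 = 0.75 = 75\%
```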

The great thing about this method is that it automatically compensates for many behavioural differences between the vaccinated and unvaccinated groups – say the unvaccinated are half as likely to go and get tested as the vaccinated: the TNCC should remove most of this effect. Of course, demographic factors are also of interest (particularly age and gender), so you’ll usually separate out these variables, but the advantages of the TNCC method remain.
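A minimal Python sketch of that cancellation, using invented counts: if the unvaccinated are only half as likely to come forward, both their positive and their negative tests are halved, so their odds of testing positive (and hence the TNCC estimate) are unchanged, whereas a naive comparison of positive tests per head shifts. The two methods are not expected to give identical numbers (one compares rates, the other odds); the point is which one moves. The sketch assumes test-seeking affects positives and negatives equally, which is exactly the assumption questioned later in this post.

```python
"""Sketch: unequal test-seeking cancels out of the TNCC odds ratio.

All counts are hypothetical and chosen only for illustration.
"""

POPULATION = 100_000  # assumed size of each group


def tncc_ve(vacc_pos, vacc_neg, unvacc_pos, unvacc_neg):
    """Test-negative VE: one minus the ratio of the odds of testing positive."""
    return 1 - (vacc_pos / vacc_neg) / (unvacc_pos / unvacc_neg)


def naive_ve(vacc_pos, unvacc_pos, population=POPULATION):
    """VE from positive tests per head, ignoring who chose to get tested."""
    return 1 - (vacc_pos / population) / (unvacc_pos / population)


# Tests we would see if everyone with symptoms came forward (invented numbers).
VACC_POS, VACC_NEG = 100, 400
UNVACC_POS, UNVACC_NEG = 500, 500


def estimates(unvacc_testing_propensity):
    """Scale unvaccinated positives AND negatives by the same testing propensity."""
    unvacc_pos = UNVACC_POS * unvacc_testing_propensity
    unvacc_neg = UNVACC_NEG * unvacc_testing_propensity
    return (
        naive_ve(VACC_POS, unvacc_pos),
        tncc_ve(VACC_POS, VACC_NEG, unvacc_pos, unvacc_neg),
    )


if __name__ == "__main__":
    for propensity in (1.0, 0.5):
        naive, tncc = estimates(propensity)
        print(f"propensity {propensity}: naive VE {naive:.0%}, TNCC VE {tncc:.0%}")
```

Halving unvaccinated test-seeking drags the naive figure down, while the TNCC figure stays put.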

Anyway, pretty much every study on Covid vaccine effectiveness makes use of TNCC – it gives such a powerful and unbiased estimate. You can read more about it in this review article.

Oh, but what’s this I see in that paper?

A key characteristic of the test-negative design is the use of a control group with the same clinical presentation but testing negative for the pathogen of interest. This group of individuals may either be positive for alternative pathogens or negative for all pathogens (pan-negative or undiagnosed). As with any case–control study, the selection of controls should be made independently of exposure status to avoid selection bias. A situation where this assumption may be violated is the presence of viral interference, where vaccinated individuals may be more likely to be infected by alternative pathogens. (Emphasis added.)

Hmm. So it is vitally important that the vaccinated cohort are not more prone than the unvaccinated to some other disease that sends people for testing – if they are, you’ll get a misleading estimate of vaccine effectiveness.

To explain further: the odds ratio (from which vaccine effectiveness is then estimated) depends on the ratio (vaccinated testing positive) / (vaccinated testing negative), divided by the same ratio in the unvaccinated. It would therefore be a problem if the number of vaccinated positives stayed the same while the number of vaccinated negatives rose – for instance, because more vaccinated people were going forward for testing with a different disease that has similar symptoms.

What’s all this I keep hearing about the ‘worst cold ever’?

Are we in a position where the vaccinated are catching some other viral infection that causes Covid-like symptoms, getting tested because of those symptoms, and then testing negative? If we are, then any estimate using the TNCC approach will be misleading, and will overestimate the vaccines’ effectiveness.

Note that this doesn’t mean that all ‘bad colds’ need to be in the vaccinated group – all that is needed is for the propensity to have a bad cold to be higher in the vaccinated group.
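A minimal Python sketch of this point, with invented counts and ‘cold’ factors: a Covid-like cold adds Covid-negative tests while leaving Covid positives untouched. It assumes the extra tests in each group are proportional to that group’s baseline negatives (i.e. to its size, if background test-seeking is equal). When the cold is equally common in both groups the estimate does not move; when it is only somewhat more common in the vaccinated, the estimated effectiveness rises, even though protection against Covid is identical in all three cases.

```python
"""Sketch: extra Covid-negative tests (e.g. from a bad cold) and the TNCC estimate.

All counts and 'cold' factors are hypothetical, chosen for illustration only.
"""


def tncc_ve(vacc_pos, vacc_neg, unvacc_pos, unvacc_neg):
    """Test-negative VE: one minus the ratio of the odds of testing positive."""
    return 1 - (vacc_pos / vacc_neg) / (unvacc_pos / unvacc_neg)


# Baseline test results before any cold wave (invented numbers).
VACC_POS, VACC_NEG = 100, 400
UNVACC_POS, UNVACC_NEG = 500, 500


def ve_with_cold(vacc_cold_factor, unvacc_cold_factor):
    """Add Covid-negative tests from a Covid-like cold, proportional to each
    group's baseline negatives (an assumption); Covid positives are unchanged."""
    vacc_neg = VACC_NEG * (1 + vacc_cold_factor)
    unvacc_neg = UNVACC_NEG * (1 + unvacc_cold_factor)
    return tncc_ve(VACC_POS, vacc_neg, UNVACC_POS, unvacc_neg)


if __name__ == "__main__":
    print(f"no cold:                  {ve_with_cold(0.0, 0.0):.0%}")
    print(f"same cold in both groups: {ve_with_cold(0.2, 0.2):.0%}")
    print(f"cold more common in vacc: {ve_with_cold(0.5, 0.2):.0%}")
```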

All we need to identify this happening is to look at the relative rates of those seeking testing, comparing vaccinated with unvaccinated (both per 100,000), and try to identify any trends in the data suggesting that the vaccinated are changing their test-seeking behaviour relative to the unvaccinated. Oh. Sorry. Those data are not available… The usual problem.

Hmm. Can we work with other data to try to identify if this particular problem is occurring?

I’d note first of all that it is no good just asking ‘is there a lot of cold going around?’ While there does appear to be some increase in ‘coughs’ (for example, see figures 28 and 32 of the most recent Government influenza survey), you absolutely need to compare vaccinated with unvaccinated to identify the problem. Without that type of data we’re stuck.

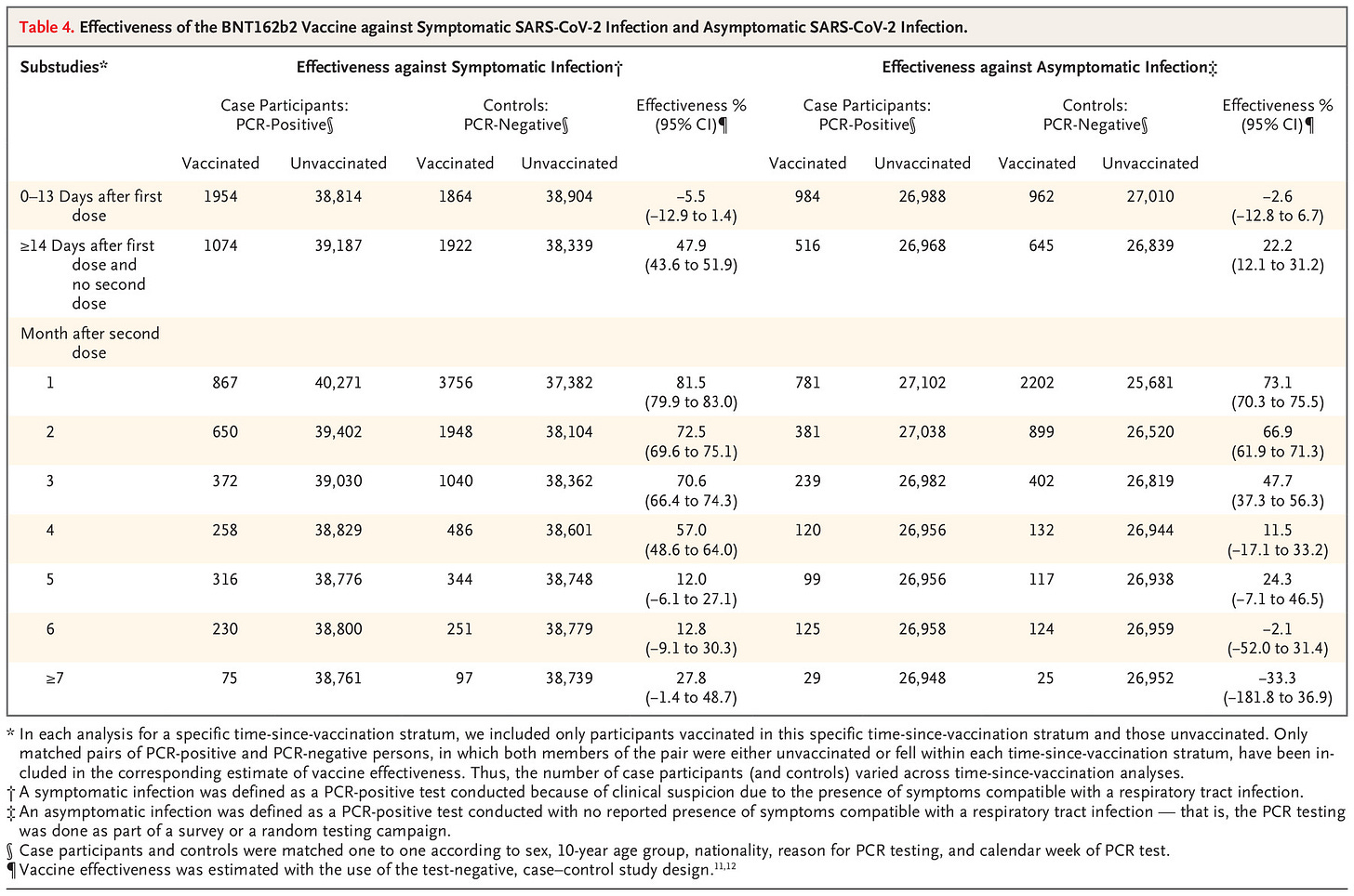

But hold on – what about comparing those getting tested without symptoms? Those data won’t be affected by any increase in Covid-like disease. Conveniently, a fairly recent paper from Qatar provides exactly these data: it reports vaccine effectiveness both for symptomatic disease (which would be affected by a worst cold ever effect if that effect were more common in the vaccinated) and for asymptomatic infection (which wouldn’t).

There it is – a significantly reduced vaccine effectiveness against asymptomatic infection (negative at six months and beyond). (The middle column gives vaccine effectiveness against symptomatic disease and the rightmost column against asymptomatic infection. The rows give vaccine effectiveness over time, with the bottom row showing it at seven-plus months.)

This might be the information we’re after: it is exactly the pattern we would expect if more people in the vaccinated cohort were being tested for non-Covid (but Covid-like) disease.

Or alternatively it might simply indicate that the vaccines have a negative effectiveness against asymptomatic disease and a positive effectiveness against symptomatic disease. Sure, that’s a terrible result and indicative of problems to come, but it doesn’t prove that the TNCC approach is giving misleading results.

Is there anything else we can do? Let’s go back to that TNCC review paper I linked to at the start of this post. Its introduction gives the real reason we use TNCC:

Case–control studies present a particularly efficient approach for monitoring VE because they tend to be faster and cheaper than cohort studies.

Okay. What about slower and more expensive approaches? Well, the ‘old-fashioned’ way is to try to compensate for every potential bias (variable) directly. This is more complex and requires more data (and so more time and money), but it does work well, and it is how things were done before TNCC came along. The best way to compensate for bias is to run proper cohort studies, where you recruit a number of people, split them into two groups with well-understood characteristics, and follow them closely over a longer period. This is expensive and time-consuming, but it really does show how things are going. Even better, with enough participants you also get an indication of the level of side effects.
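For comparison with the TNCC calculation, here is a minimal Python sketch of the basic cohort-style estimate: count cases and person-time of follow-up in each group and compare incidence rates. It assumes the two groups are already comparable (the hard, expensive part of a real cohort study, which this does not attempt), and the counts are invented. The 95% interval uses the standard large-sample approximation on the log rate ratio, and shows why participant numbers matter: with few cases the interval becomes very wide.

```python
"""Sketch: vaccine effectiveness from a cohort study using person-time.

Counts are hypothetical; the 95% interval uses the usual large-sample
(Wald) approximation on the log incidence rate ratio.
"""
import math


def cohort_ve(vacc_cases, vacc_person_years, unvacc_cases, unvacc_person_years, z=1.96):
    """Return (VE, lower, upper), where VE = 1 - incidence rate ratio."""
    irr = (vacc_cases / vacc_person_years) / (unvacc_cases / unvacc_person_years)
    se_log_irr = math.sqrt(1 / vacc_cases + 1 / unvacc_cases)
    irr_low = math.exp(math.log(irr) - z * se_log_irr)
    irr_high = math.exp(math.log(irr) + z * se_log_irr)
    # A high rate ratio means low effectiveness, so the bounds swap over.
    return 1 - irr, 1 - irr_high, 1 - irr_low


if __name__ == "__main__":
    ve, low, high = cohort_ve(30, 10_000, 60, 5_000)
    print(f"VE {ve:.0%} (95% CI {low:.0%} to {high:.0%})")
```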

I suggest that this should have been done with the Covid vaccines – the initial clinical trials were time-limited for understandable reasons, but that didn’t mean we should have stopped looking. In fact, it is usual to have a period of extended pharmacovigilance for newly approved drugs and treatments (sometimes called ‘phase IV trials’), and it is rather strange that this wasn’t done for the Covid vaccines. However, it is too late for that now – we’ve vaccinated everyone without making the effort to select a set of subgroups for longer-term analysis of the vaccines’ safety and effectiveness.

Luckily, though, it isn’t too late to do anything at all, because there are other techniques for adjusting for bias in the data. The most common is multivariable logistic regression (MLR), applied to survey data in a retrospective study.

The important thing about MLR is that it does actually work. It could be argued that TNCC is better, but the two should at least give similar results – the main advantage of TNCC is that it is faster and cheaper. In particular, a TNCC estimate that differs markedly from an MLR estimate should prompt questions, not simply ‘TNCC is better. The End.’
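As a rough illustration of what multivariable adjustment does, here is a minimal, dependency-free Python sketch of logistic regression fitted by Newton-Raphson to invented, grouped survey data with a single confounder (age band). It is a generic textbook method written for illustration, not the Qatar authors’ analysis; their model also included sex, nationality, reason for testing and calendar week, which would enter as extra columns. Within each age band the vaccinated have one-sixth the odds of infection of the unvaccinated, so the adjusted estimate should come out at about 83%, whereas the crude comparison gives about 95%, because the vaccinated are concentrated in the older band, which in this made-up example has fewer infections.

```python
"""Sketch: adjusting for a confounder with multivariable logistic regression.

The grouped counts are invented for illustration. Model:
    logit P(infected) = b0 + b1 * vaccinated + b2 * older
Vaccine effectiveness is then estimated as 1 - exp(b1), i.e. one minus
the adjusted odds ratio.
"""
import math

# (vaccinated, older, infected, not infected) - hypothetical survey counts
CELLS = [
    (1, 1, 20, 980),
    (0, 1, 6, 49),
    (1, 0, 10, 90),
    (0, 0, 200, 300),
]


def solve(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [list(row) + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def fit_logistic(cells, max_iter=50, tol=1e-10):
    """Maximum-likelihood fit of the model above by Newton-Raphson."""
    beta = [0.0, 0.0, 0.0]
    for _ in range(max_iter):
        score = [0.0] * 3
        info = [[0.0] * 3 for _ in range(3)]  # Fisher information matrix
        for vaccinated, older, pos, neg in cells:
            x = [1.0, float(vaccinated), float(older)]
            n = pos + neg
            p = 1 / (1 + math.exp(-sum(b * xi for b, xi in zip(beta, x))))
            for i in range(3):
                score[i] += (pos - n * p) * x[i]
                for j in range(3):
                    info[i][j] += n * p * (1 - p) * x[i] * x[j]
        step = solve(info, score)
        beta = [b + s for b, s in zip(beta, step)]
        if max(abs(s) for s in step) < tol:
            break
    return beta


def crude_ve(cells):
    """Unadjusted VE: one minus the odds ratio after pooling the age bands."""
    vp = sum(pos for v, _, pos, _ in cells if v)
    vn = sum(neg for v, _, _, neg in cells if v)
    up = sum(pos for v, _, pos, _ in cells if not v)
    un = sum(neg for v, _, _, neg in cells if not v)
    return 1 - (vp / vn) / (up / un)


if __name__ == "__main__":
    b0, b1, b2 = fit_logistic(CELLS)
    print(f"crude VE:    {crude_ve(CELLS):.1%}")
    print(f"adjusted VE: {1 - math.exp(b1):.1%}")
```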

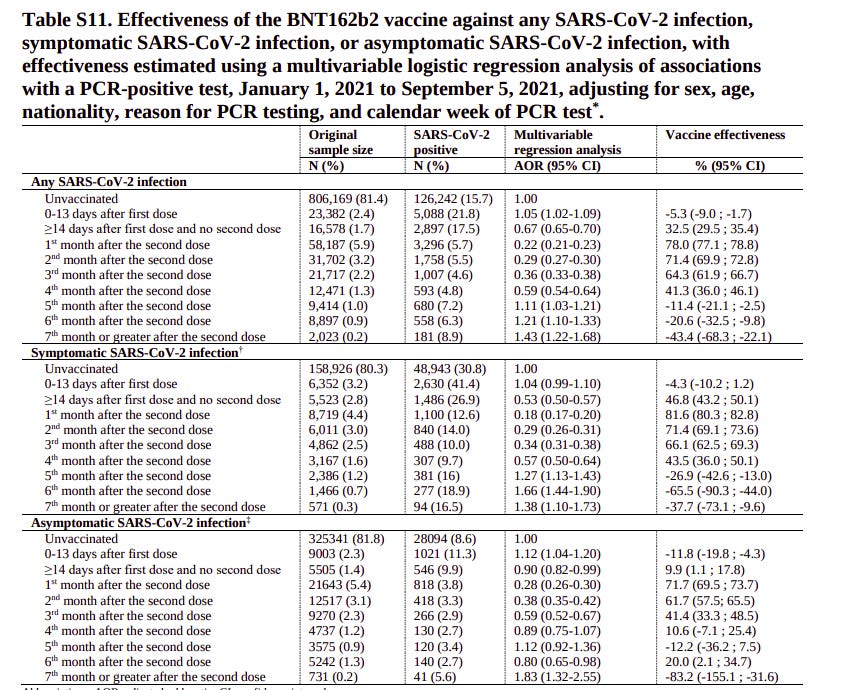

Is there such a case? Or, even better – has anyone done an MLR and a TNCC analysis on the same data? Amazingly, the Qatar study also includes an MLR. Sure, it is tucked away in the supplementary materials, but it is there, in Table S11.

And there it is. Markedly worse vaccine performance for the MLR analysis than the TNCC analysis shown earlier. It is worth repeating that this is for the same data – all that is changed is the type of analysis used.

It is important to note that this isn’t simply ‘well, let’s just add all the numbers up’, as is being done with the UKHSA tables (albeit split by age group) – it is a proper analysis that takes into account age, sex, nationality, reason for testing and calendar week of test (the last of these is intended to remove Covid wave effects). It therefore answers the usual complaint about the simple analysis of the UKHSA tables: that uncorrected biases lead to misleading results.

What’s more, there’s a time effect. The vaccine effectiveness from the MLR is broadly similar to the TNCC estimate up to month four. That’s good, because you really do want the MLR to agree with the TNCC: when two very different methods give similar results, it suggests that the residual biases in both are low. But differences appear from month five. Because the results were similar in the earlier months, this is less likely to be a simple behavioural (or similar) bias, which leaves the question I started with: is the TNCC approach failing because of the worst cold ever effect?

I’d also note another aspect to all this. While we like to ‘do things properly’ to remove bias when estimating important things like vaccine effectiveness, as a general rule it doesn’t make that much difference at population scale – the larger the numbers, the more likely it is that a simple approach will get you close to the truth (so long as the really important factors, usually age, are taken into account). The problem is that population-wide estimates of vaccine effectiveness (e.g. from the UKHSA tables) are very different from the official estimates from ONS. The MLR results in the Qatar study suggest that the problem lies with the TNCC approach that everyone is using, and that the truth is closer to the UKHSA estimate than the ONS estimate.

One more point. Viral interference, that is, the vaccine increasing the risk of infection with alternative pathogens, is just one mechanism by which the TNCC method might give erroneous results. The basic assumption of the TNCC method is that the vaccinated and unvaccinated groups are comparable; as the discussion section of the review paper I linked to at the start notes:

Simulation studies have shown that biases may arise under several circumstances, including if the study fails to adjust for calendar time, if vaccination affects the probability of non-<target disease> infections, if vaccination affects the probability of seeking care between cases and controls, if healthcare-seeking behavior differs substantially between cases and controls, and if misclassification bias is present.

And:

The test-negative design may be more appropriate for some vaccines and pathogens, but less appropriate in some scenarios for example if vaccination reduces disease severity in breakthrough infections.

Oh dear. Perhaps it isn’t simply the fault of the worst cold ever – perhaps any number of problems might be resulting in an erroneous estimate of vaccine effectiveness, some of which sound a little too familiar, such as the vaccines reducing disease severity…
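To see why vaccines that reduce severity complicate things, here is a minimal Python sketch with invented parameters. Suppose (hypothetically) vaccination halves the risk of infection and, separately, halves the chance that a breakthrough infection is symptomatic enough to lead to a test, while the test-negatives come from other illnesses unaffected by vaccination. The TNCC figure then blends the two effects, so it differs from the vaccine’s effect on infection itself; whether that counts as an error depends on which quantity the estimate is claimed to measure.

```python
"""Sketch: a severity-reducing vaccine and the test-negative estimate.

Every parameter below is a hypothetical assumption for illustration.
"""

POPULATION = 100_000  # people in each group
INFECTION_RISK = {"vaccinated": 0.01, "unvaccinated": 0.02}
# Chance that an infection produces symptoms that lead to a test
P_TESTED_IF_INFECTED = {"vaccinated": 0.3, "unvaccinated": 0.6}
# Covid-negative tests from other illnesses, assumed unaffected by vaccination
OTHER_ILLNESS_TESTS = {"vaccinated": 2_000, "unvaccinated": 2_000}


def tncc_ve(vacc_pos, vacc_neg, unvacc_pos, unvacc_neg):
    """Test-negative VE: one minus the ratio of the odds of testing positive."""
    return 1 - (vacc_pos / vacc_neg) / (unvacc_pos / unvacc_neg)


def compare():
    """Return (VE against infection, VE from the test-negative design)."""
    positives = {
        group: POPULATION * INFECTION_RISK[group] * P_TESTED_IF_INFECTED[group]
        for group in INFECTION_RISK
    }
    ve_infection = 1 - INFECTION_RISK["vaccinated"] / INFECTION_RISK["unvaccinated"]
    ve_tncc = tncc_ve(
        positives["vaccinated"], OTHER_ILLNESS_TESTS["vaccinated"],
        positives["unvaccinated"], OTHER_ILLNESS_TESTS["unvaccinated"],
    )
    return ve_infection, ve_tncc


if __name__ == "__main__":
    infection, tncc = compare()
    print(f"VE against infection: {infection:.0%}")
    print(f"TNCC estimate:        {tncc:.0%}")
```

Under these assumptions the test-negative design reports 75% although the vaccine only halves infections.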

Is this analysis enough to conclusively prove that the official estimates are wrong? No. But there are clear indications that something serious could be going wrong and that a much deeper analysis with more data, and perhaps a more appropriate method, is required. Until that is done I’d suggest that any estimates of Covid vaccine effectiveness using the TNCC method should be treated with caution.
