Ocean acidification refers to the decrease in the pH of the world’s oceans owing to the absorption of carbon dioxide from the atmosphere. One of its alleged effects is a marked change in the behaviour of fish that inhabit coral reefs.

For example, one 2010 study published in Proceedings of the National Academy of Sciences found that exposing larval fish to elevated CO2 disrupted their olfactory systems, causing them to become attracted to the smell of their predators. As a result, they experienced a 5–9-fold increase in mortality from predation.

Such studies have generated substantial media coverage, including headlines such as ‘Increasingly acidic oceans are causing fish to behave badly’ and ‘Losing Nemo – acid oceans prevent baby clownfish from finding home’. And they’ve even been presented at the White House.

Yet according to a recent meta-analysis, effect sizes in this literature have declined dramatically over time – suggesting that early studies (like the one mentioned above) overstated the impact of ocean acidification on fish behaviour.

Jeff Clements and colleagues reviewed 91 studies published between 2009 and 2019 and obtained an ‘effect size’ from each one. Here, the effect size was a measure of the difference in fish behaviour between the control group and the treatment group that had been exposed to lower pH.
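To make the idea of an effect size concrete, here is a minimal Python sketch of one common choice, the standardised mean difference (Hedges’ g): the gap between the treatment and control means divided by their pooled standard deviation, with a small-sample correction. It is an illustration only and not necessarily the metric Clements and colleagues used; the helper name `hedges_g` and the example scores are hypothetical.

```python
import math
from statistics import mean, stdev


def hedges_g(control: list[float], treatment: list[float]) -> float:
    """Hedges' g: standardised difference between treatment and control means.

    Hypothetical helper showing one common effect-size metric; not necessarily
    the metric used in the meta-analysis. Positive values mean the treatment
    group scored higher than the control group.
    """
    n1, n2 = len(control), len(treatment)
    # Pooled variance: each group's sample variance weighted by its degrees of freedom.
    pooled_var = ((n1 - 1) * stdev(control) ** 2
                  + (n2 - 1) * stdev(treatment) ** 2) / (n1 + n2 - 2)
    d = (mean(treatment) - mean(control)) / math.sqrt(pooled_var)
    # Small-sample correction factor J shrinks d slightly towards zero.
    j = 1 - 3 / (4 * (n1 + n2) - 9)
    return j * d


# Hypothetical scores, e.g. the share of time a fish spends near a predator cue.
control = [0.20, 0.30, 0.25, 0.35]
treatment = [0.60, 0.70, 0.65, 0.55]
print(round(hedges_g(control, treatment), 2))
```

For example, `hedges_g([1, 2, 3], [2, 3, 4])` returns 0.8: the means differ by exactly one pooled standard deviation, and the correction factor for six fish in total (0.8) shrinks that towards zero.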

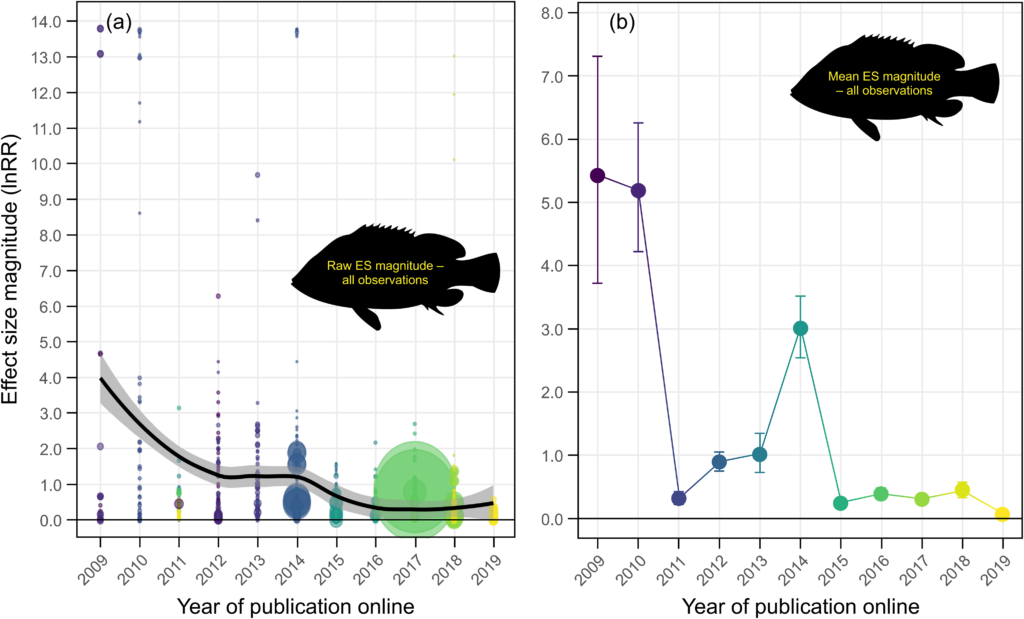

The authors’ main results are shown in the chart. The left-hand panel shows all the effect sizes (91 in total), while the right-hand panel shows the average value for each year.

As you can see, studies published in 2009 and 2010 reported much higher effect sizes than those published in subsequent years – especially the last five years. Indeed, averages for the last five years are close to zero.

One plausible explanation for this ‘decline effect’ is the presence of methodological biases in early studies, which were then corrected by later, more rigorous studies. In particular, studies with small sample sizes are more prone to false positives, and are easier to ‘p-hack’.
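A related problem is that, if mainly statistically significant results get published, small studies systematically overstate effects: with few fish, only a large (and often lucky) estimate can clear the significance bar. The sketch below simulates this under loudly stated assumptions that are purely illustrative and not taken from the meta-analysis: a small true effect of 0.2 standard deviations, normally distributed behaviour scores with a known standard deviation of 1, a simple two-sided z-test, and 15 versus 60 fish per group.

```python
import math
import random
from statistics import fmean


def two_sided_p(z: float) -> float:
    """Two-sided p-value for a standard normal test statistic."""
    return 1 - math.erf(abs(z) / math.sqrt(2))


def significant_estimates(true_effect: float, n_per_group: int, n_studies: int,
                          rng: random.Random, alpha: float = 0.05) -> list[float]:
    """Simulate many two-group studies; return only the estimates with p < alpha.

    Assumption: behaviour scores are normal with SD 1, so each estimated mean
    difference is already in standard-deviation units.
    """
    se = math.sqrt(2 / n_per_group)  # standard error of a difference in means
    kept = []
    for _ in range(n_studies):
        control = [rng.gauss(0, 1) for _ in range(n_per_group)]
        treated = [rng.gauss(true_effect, 1) for _ in range(n_per_group)]
        estimate = fmean(treated) - fmean(control)
        if two_sided_p(estimate / se) < alpha:
            kept.append(estimate)  # only 'publishable' results survive
    return kept


rng = random.Random(42)
TRUE_EFFECT = 0.2  # assumed small true effect, in SD units
small = significant_estimates(TRUE_EFFECT, 15, 4000, rng)
large = significant_estimates(TRUE_EFFECT, 60, 4000, rng)
print(f"15 fish per group: {len(small)} significant, mean estimate {fmean(small):.2f}")
print(f"60 fish per group: {len(large)} significant, mean estimate {fmean(large):.2f}")
```

With these settings, the small studies that reach significance should report a much larger average effect than the large ones, and both should exceed the assumed true effect of 0.2. Larger samples do not remove the bias, but they shrink it considerably.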

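p-hacking is where researchers do things like exclude data, change outcome variables or run different tests until they obtain a statistically significant ‘p-value’. The p-value is the probability of getting a result at least as extreme as the one observed if there were no real effect; a small p-value (conventionally below 0.05) is taken as evidence that a result is not simply due to chance.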
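One form of p-hacking, measuring several outcome variables and reporting whichever one comes out significant, is easy to quantify. If there is no real effect and a study tests k independent outcomes at the 0.05 level, the chance that at least one is significant is `1 - 0.95**k`, about 23% for five outcomes. The following sketch checks this by simulation; the function name, the sample size of 20 fish per group, the number of simulated studies and the assumption that outcomes are independent are all hypothetical choices for illustration.

```python
import math
import random
from statistics import fmean


def two_sided_p(z: float) -> float:
    """Two-sided p-value for a standard normal test statistic."""
    return 1 - math.erf(abs(z) / math.sqrt(2))


def false_positive_rate(n_outcomes: int, n_per_group: int = 20, n_studies: int = 4000,
                        seed: int = 1, alpha: float = 0.05) -> float:
    """Share of no-effect studies declared 'significant' when the researcher
    tests several independent outcomes and reports the first one with p < alpha.

    There is no true effect: both groups come from the same N(0, 1) distribution.
    """
    rng = random.Random(seed)
    se = math.sqrt(2 / n_per_group)  # standard error of a difference in means
    hits = 0
    for _ in range(n_studies):
        for _ in range(n_outcomes):
            control = [rng.gauss(0, 1) for _ in range(n_per_group)]
            treated = [rng.gauss(0, 1) for _ in range(n_per_group)]
            if two_sided_p((fmean(treated) - fmean(control)) / se) < alpha:
                hits += 1
                break  # stop at the first 'significant' outcome and report it
    return hits / n_studies


for k in (1, 3, 5):
    print(f"{k} outcome(s): false-positive rate ~ {false_positive_rate(k):.3f}")
```

The printed rates should sit close to `1 - 0.95**k`, that is roughly 0.05, 0.14 and 0.23 for one, three and five outcomes, even though every simulated study has a true effect of exactly zero.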

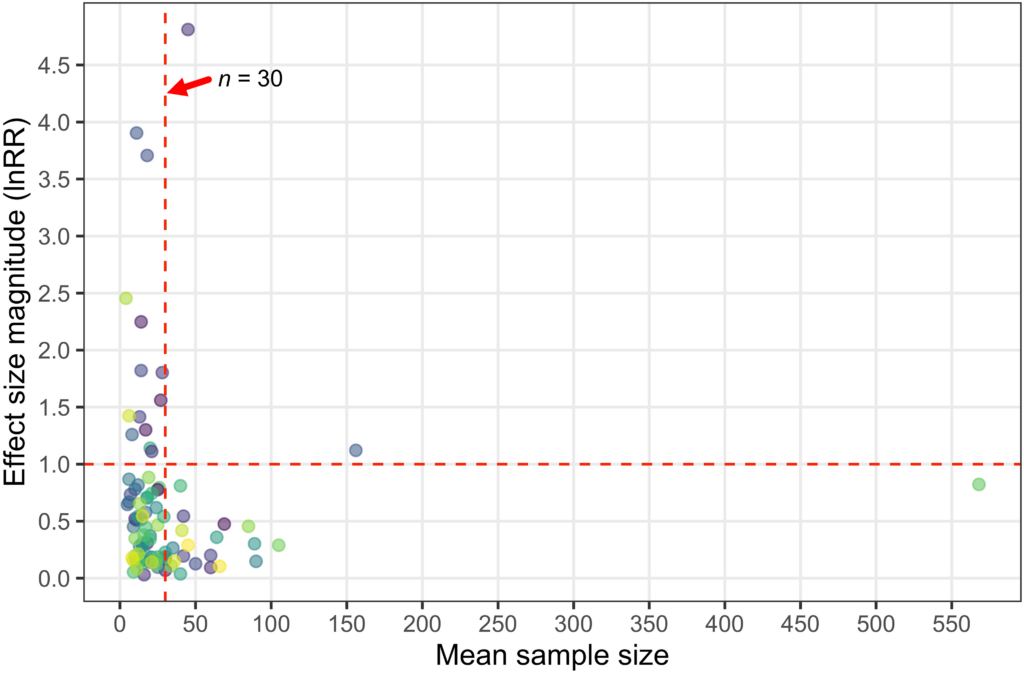

Consistent with this explanation, Clements and colleagues found that all the studies from 2009 and 2010, which had reported very large effect sizes, were based on small samples – in almost every case, fewer than 30 fish.

By contrast, most of the studies with sample sizes of at least 50 fish were clustered near the bottom of the effect size scale. One study with a very large sample size yielded a moderate effect size.

As the authors note, the decline effect has been observed in various research areas. It most likely stems from the fact that novel studies reporting inflated effect sizes are more likely to prompt replication attempts than those reporting null effects. Hence most new research areas begin with large effect sizes.

However, there’s another explanation for the decline effect observed in Clements and colleagues’ meta-analysis: research misconduct. A university investigation recently found that Danielle Dixson (the researcher who presented her findings at the White House) had committed both fabrication and falsification in her work on fish behaviour.

Dixson was a prolific researcher, which means that many – perhaps all – of the large effect sizes from earlier years may have simply been made up. Indeed, when Clements and colleagues excluded studies published by her or her colleagues from their meta-analysis, the decline effect was no longer apparent.

As to why it took so long for Dixson’s misconduct to be uncovered, one might speculate that other scientists concerned about climate change wanted to believe her findings. In any case, the effects of ocean acidification on fish behaviour are much smaller than previously claimed, and may in fact be close to zero.
