About one half of all land surface temperature measurements used to show global warming and promote the command-and-control Net Zero agenda are taken near or adjacent to airport runways. This amazing fact from research by Professor Ross McKitrick casts further serious doubt on the validity of three major global temperature datasets, including the one compiled by the Met Office, which continue to show higher global temperatures compared with other reliable measurements made by satellites and meteorological balloons.

Airports are arguably uniquely unsuitable for providing an insight into global temperatures. Many of them are major industrial complexes spread over miles of heat-radiating tarmac and concrete, containing industrial buildings and subject to constant super-heated jet exhausts measuring hundreds of degrees centigrade.

There are three major global surface datasets. HadCRUT is compiled by the University of East Anglia’s Climatic Research Unit in collaboration with the Met Office, NASA’s Goddard Institute for Space Studies maintains the GISS record, and the NOAA record is compiled by the U.S. National Oceanic and Atmospheric Administration. All three global averages depend on the same underlying land data from the Global Historical Climatology Network (GHCN). As I have noted on numerous occasions, all three datasets have been subject to significant adjustments, which have had the effect of increasing recent warming and cooling the historical record.

As the Daily Sceptic reported on Monday, Emeritus Professors William Happer and Richard Lindzen recently told the U.S. Government that over the last several decades, “NASA and NOAA have been fabricating temperature data to argue that rising CO2 levels have led to the hottest year on record”. These false and manipulated data were said to be an “egregious violation of scientific method”.

In the U.K., the surface datasets are used to weaponise the weather in the political interests of Net Zero. A few days of hot weather would in the past have attracted ‘Phew What a Scorcher’ headlines, but now these are written along the lines of ‘Life threatening heatwave in tinderbox Britain!’ The Met Office regularly proclaims the temperature at Heathrow, seemingly desperate to promote the highest measurement it can find. Meanwhile, the BBC fills its climate page with stories like: “Heatwave: Can I refuse to work?”

The preponderance of airport data in the global surface temperature record has been known for a number of years, although little discussion of the matter can be found in mainstream media or academic literature. In a paper published in 2010, Ross McKitrick, Professor of Economics at the University of Guelph in Ontario, noted that the number of weather stations providing data to GHCN plunged in 1990 and again in 2005. This had the effect of increasing the contribution from airports to around 50%. McKitrick noted that the cuts reduced the average latitude of the source data and removed a disproportionate number of cooler, high-altitude monitoring sites. GHCN is said to apply correcting adjustments, but after 1990 their magnitude “gets implausibly large”.

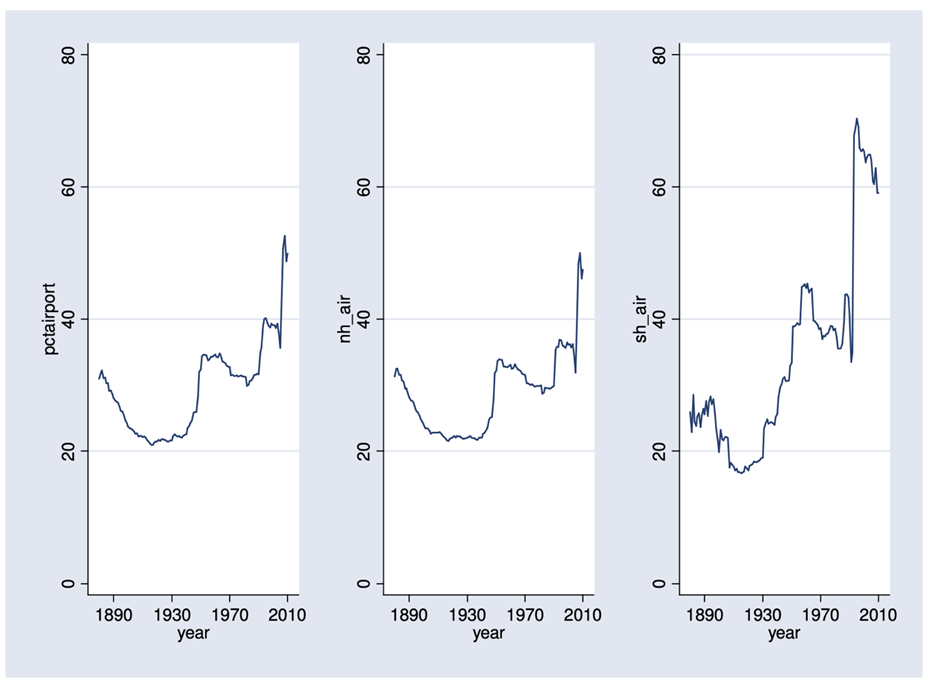

The above graph is given by Professor McKitrick to show the percentage of data supplied by airport sites to GHCN from 1890 to 2010. The left panel shows the global percentage, the middle panel the northern hemisphere and the right panel the southern hemisphere. The data going back to 1890 presumably refer to sites that later became airports. For instance, Chicago’s O’Hare Airport was an orchard field with a measuring thermometer in the late 1800s and retains the ORD code to this day. The large jump after 1990 is clearly visible in all three panels.

In 2017, three climate writers, Drs. James Wallace, Joseph D’Aleo and Craig Idso, wrote a paper that examined the validity of the NOAA, NASA and HadCRUT data. The conclusions reached were endorsed by a number of academics, including Dr. Richard Keen, Instructor Emeritus of Atmospheric and Oceanic Science at the University of Colorado, and Dr. Anthony Lupo, Professor in Atmospheric Science at the University of Missouri. The findings were damning: “The conclusive findings of this research are that the three GAST [global average surface temperature] datasets are not a valid representation of reality.”

The Wallace authors were interested in the numerous adjustments that are made to the surface temperature record, a habit that continues to this day. Since 2013, the Met Office has added 30% extra heating to the recent temperature record and cooled past records. ‘Hottest ever’ years are declared, despite the fact that the U.K. was actually colder in the 2010s than the 2000s. The global temperature pause from 1998 to around 2012 has been adjusted out of the fifth edition of HadCRUT, although it is still obvious in the satellite and balloon records. According to the satellite record, rarely mentioned in the mainstream media, the recent spell of global warming started to run out of steam over 20 years ago.

Similar adjustments are seen in all three datasets; the three authors are not impressed: “In fact, the magnitude of their historical data adjustments, that removed their cyclical temperature patterns, are totally inconsistent with published and credible U.S. and other temperature data.”

Again, we refer to the words of Profs Happer and Lindzen: “Misrepresentation, exaggeration, cherry picking or outright lying pretty much covers all the so-called evidence marshalled in support of the theory of catastrophic global warming caused by fossil fuels and CO2.”

Almost every plank that supports Net Zero is provided by these surface temperature datasets, amplified by climate models. They are at the centre of the grim pronouncements made by the IPCC and loyally passed on by mainstream messengers. Time, perhaps, to look in detail at temperature datasets boosted by jet exhausts and constant upward adjustments, along with climate forecasting models that are invariably wrong, to see if the science is quite as ‘settled’ as defined by elite political opinions.

Chris Morrison is the Daily Sceptic’s Environment Editor
