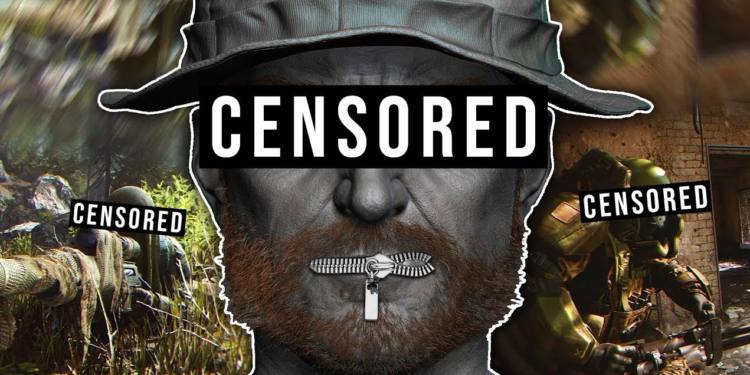

Call of Duty (CoD), a video game series published by Activision, has jumped into the murky waters of AI-powered censorship after revealing a new partnership with AI voice moderation tool Modulate ToxMod. The tool will be built into the newest CoD game, Modern Warfare 3, which will be released on November 10th this year. It is currently being trialled on Modern Warfare 2 and Call of Duty: Warzone. It will be used for flagging ‘foul-mouthed’ players and identifying hate speech, racial or homophobic language, misogyny and any ‘misgendering’. Players do not have the option to prevent the AI listening in.

As well as being the latest development in online censorship, this can also be seen as an escalation of the war on men. The majority of CoD players are male, and the game is often played after a hard day’s work or late at night after a visit to the pub, and involves a great deal of banter. The AI could be very busy, given the nature of the game, which is confrontational, can be played by opposing teams and can also be played across international boundaries. One of my uncles was among the top 100 players in the world and the language emanating from his bedroom was, um, ‘choice’.

ToxMod can listen in to players’ voice chats and delve into the tone and intent of what is said – including its emotion, volume and prosody – by using sophisticated machine-learning models. The developers claim that their aim is to crack down on foul language and ‘toxic behaviour’. But why should players trust ToxMod’s definition of what is ‘toxic’? Many will suspect that a real motive is to impose on gamers a woke vision of an ‘inclusive’ society sanitised from all forbidden thoughts. What’s next – microphones under our seats at football stadiums?

We are, sadly, becoming used to having AI spy on us. This already happens routinely with WeChat, a platform used by huge numbers in China and also widely across the West. TikTok, another Chinese platform that allows users to create and share short-form videos on any topic, admitted to spying on U.S. users at the end of last year, and this has led to discussions about the app being banned. Snapchat introduced its own AI chatbot in April this year, which knows your location – even though the app promised that it didn’t – asks personal questions and always enquires about your day.

In George Orwell’s dystopian novel 1984 – which too many these days seem to mistake for a manual – the Government used telescreens, which served as both information conveyors and surveillance devices, to monitor and spy on the population in public and private areas. ToxMod has all the marks of yet another Orwellian prophecy coming to pass.

Call of Duty is a game designed for adults. In a free country, adults should reasonably expect privacy when engaging in conversations in their own homes.

And what, we should ask, is ToxMod going to do with all the data it collects on players’ ‘hate speech’ and ‘toxic behaviour’? At the moment it’s unclear, but don’t be surprised to see figures emerging in the coming months on the number of players facing bans for ‘hate speech’ as a result of their CoD conversations.

It’s hard to imagine any of this going down well with players. Will CoD find itself the latest victim of ‘go woke, go broke’ as players abandon a game that presumes to monitor their private conversations and inflict penalties on them for what it overhears?

Jack Watson, who’s 14, has a Substack newsletter called Ten Foot Tigers about being a Hull City fan. You can subscribe here.
