In November 2021, Gavin Schmidt, one of the main programmers of the NASA climate model, told readers of the Spectator that the track record of models going back to the 1970s “shows they have skilfully predicted the trends of the past decades”. Now that the laughter has finally subsided, we have an expert analysis of NASA’s GISS Model E, with its 441,668 lines of prehistoric (circa 1983) FORTRAN code. Given water that doesn’t freeze and “negative” cloud cover, it is said that the model is ‘physics-based’ only in the sense that Hollywood producers say a movie is ‘based on a true story’.

The detailed examination is by the experienced computer programmer Willis Eschenbach, and his paper, Climate Models and Climate Muddles, has been published by Net Zero Watch (NZW). Andrew Montford of NZW discussed the paper in a recent edition of the Daily Sceptic, noting that climate models are at the centre of the global warming scare and underpin all the weather alarms promoting the collectivist Net Zero project. But what, he asked, if the climate models were all junk? Somewhat alarmingly, Eschenbach’s work shows “this is indeed the case”.

Eschenbach argues that the current crop of computer climate models is far from fit to be used to decide public policy. To verify this, he says, you only need to look at the endless string of bad, failed, crashed-and-burned predictions they have produced. Pay them no attention, he cautions. “Their main use is to add false legitimacy to the unrealistic fears of the programmers.” If you write a model under the working assumption that carbon dioxide controls the temperature, then guess what you’ll get.

According to Eschenbach, climate models have a hard time replicating the remarkable stability of the climate system. They are ‘iterative’ models, meaning the output of one timestep is used as the input for the next. As a result, any errors are carried forward, making it easy for models to spiral the Earth into a fireball or a snowball. When polar water refuses to freeze, or ‘negative’ amounts of cloud form during model runs (what do minus-two clouds look like?), NASA gets around the problem by replacing the bad values with the corresponding maximum or minimum values. “Science at its finest,” comments Eschenbach. He notes that he is not singling out NASA. The same issues, to a greater or lesser extent, exist within all complex iterative models. “I’m simply pointing out that these are not ‘physics-based’ – they are propped up and fenced in to keep them from crashing,” he observes.
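To make the mechanism concrete, here is a toy sketch in Python. It is not NASA’s code (GISS Model E is written in FORTRAN) and it models no real physics: the functions `step` and `run`, the starting value and the constant per-step error are all hypothetical. It illustrates only the two points described above: an error made at one timestep is fed into the next, so it accumulates, and clamping the result to the physical range of 0 to 1 for cloud fraction pins the value at the boundary rather than correcting the underlying error.

```python
# Toy illustration only: not GISS Model E code and not real cloud physics.
# "step", "run" and the constant per-step error are hypothetical.


def step(cloud_fraction: float, error: float) -> float:
    """One hypothetical timestep: the new state is built from the previous one,
    plus a small constant error standing in for an imperfect calculation."""
    return cloud_fraction + error


def run(initial: float, error: float, steps: int, clamp: bool) -> list[float]:
    """Iterate the model, feeding each output back in as the next input."""
    state = initial
    history = [state]
    for _ in range(steps):
        state = step(state, error)  # earlier errors are carried forward
        if clamp:
            # Replace an out-of-range value with the nearest bound, since a
            # cloud fraction must lie between 0 and 1.
            state = min(max(state, 0.0), 1.0)
        history.append(state)
    return history


if __name__ == "__main__":
    print(run(0.5, -0.25, 4, clamp=False))  # drifts below zero
    print(run(0.5, -0.25, 4, clamp=True))   # pinned at the lower bound
```

Run for four steps from 0.5 with an error of -0.25 per step, the unclamped version drifts to -0.5, a ‘negative’ cloud fraction, while the clamped version reaches 0.0 at the second step and is held there by the clamp from then on.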

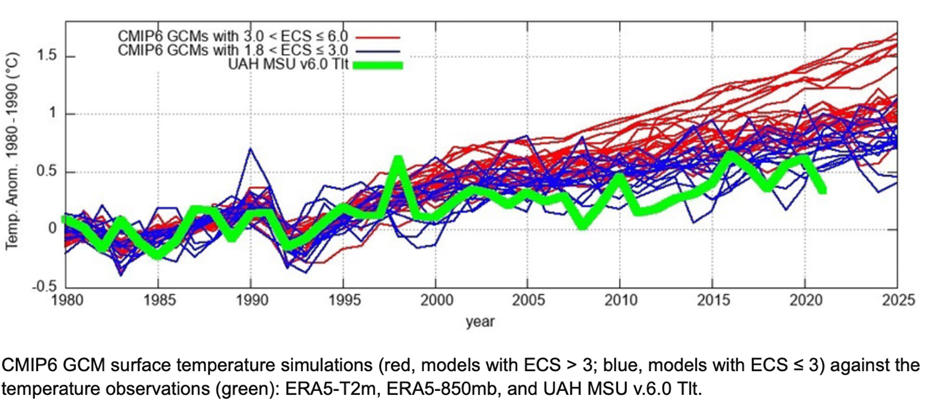

This graph, produced by Professor Nicola Scafetta, plots the temperature predictions of 38 of the major climate models against the actual satellite record, shown as the thick green line.

As can be seen, the predictions started to go haywire 25 years ago, just as the global warming fright started to gain political traction. In his Spectator article, Gavin Schmidt, a one-time ‘fact checker’ of the Daily Sceptic, noted that most outcomes depend on the overall trend and not the “fine details of any given model”. In fact, the record shown above seems to back up Eschenbach’s view that all a computer model can do is “make visible and glorify the understandings and, more importantly, the misunderstandings of the programmers”.

The case against relying on computer models to back an insane global de-industrialisation campaign grows by the day. The latest nonsense, peddled by the BBC among many other media outlets, is that the record for the world’s hottest day was broken three times last week. As climate journalist Paul Homewood noted, a rise in global temperatures of 0.22°C in just three days is physically impossible. The entire propaganda exercise is the product of computer modelling, which will come as no surprise to any reader of Eschenbach’s diligent work.

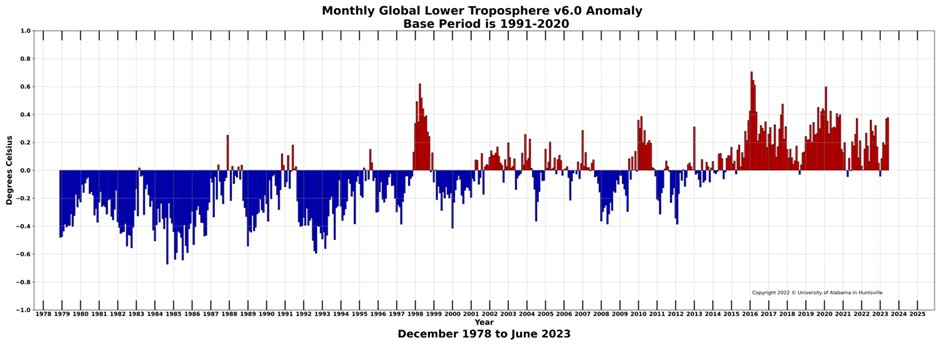

There is a great deal of excitement in alarmist circles about a new El Niño weather oscillation that is starting to brew and might come to the rescue with a little extra heat. Hence all the recent useful-idiot coverage of ‘boiling oceans’ and record heat days. Any El Niño warming will of course be entirely natural but, cynics might note, it will alleviate the need for surface datasets to make yet more upward retrospective adjustments. The dramatic effect of El Niños can be seen in the latest anomaly data from the accurate satellite temperature record.

The two peaks shown, in 1998 and 2016, both occurred in very powerful El Niño years that pushed global temperatures up. Taking the 1998 peak as the reference point, a case can be made that global warming ran out of steam at that point. It is of course just one year, but it was 25 years ago and temperatures have only twice passed this peak since, in the dramatic El Niño of 2016. A small amount of warming can be discerned since then, but hardly enough to justify the worldwide panic caused by manufactured climate models and heavily adjusted surface temperature data.

The widespread use of Armageddon model predictions was highlighted recently by research from Clintel. It showed that 42% of the gloomy forecasts made by the Intergovernmental Panel on Climate Change were based on climate model scenarios that even the UN body admits are of “low likelihood”. They assume temperature rises of up to 5°C in less than 80 years. Almost nobody now believes the scenarios are remotely plausible. Yet it has been shown that around half the impacts and forecasts across the entire scientific literature are based on them. It is a fair bet that almost 100% of the increasingly hysterical climate headlines found across mainstream media are corrupted by these fantastical notions.

Chris Morrison is the Daily Sceptic’s Environment Editor.
