Rod Driver’s recent piece was interesting but perhaps in black and white rather than shades of grey. There is, to be sure, overenthusiasm for new drugs, and occasionally concealment of risk, but the situation is more nuanced than he sets out.

In my medical career of nearly 50 years, 28 as a consultant rheumatologist, I have taken a great deal of notice of drugs – which is hardly surprising, as I have used many. As a trainee I was fascinated by the sharp dissection of a series of trials of anti-inflammatory drugs made by one of my friends. He outlined a long series of faults, ranging from inappropriate comparators, poor trial construction and inappropriate dosing schedules to the use of the wrong statistical tools. In my book “Mad Medicine” I have described some of these, with a specific personal episode illustrating how data can be ignored.

In 1982 I went to a company symposium abroad launching a new drug; this was not the ill-famed Orient Express trip, but much gin was tasted, as the meeting was in Amsterdam. This may not be quite as bad as it looks, because it was cheaper to fly all the British rheumatologists to Amsterdam than it was to fly the much smaller number of worldwide experts to London. The pharmacokinetics of the drug had been tested on, I think, eight normal subjects. Seven were very similar in terms of the plasma half-life. The eighth was quite different. In the presentation the pharmacologist blithely told the audience that the outlier had been ignored in the analysis of the data. I got up and asked how it was scientific to exclude over 12% of the sample; perhaps the wayward subject had some genetic difference that meant he metabolised the drug differently. He couldn’t possibly assume that this one was unique and, if they had done another hundred tests, who was to know whether another dozen subjects would have produced the same result? Much muttering and harrumphing went on. I ignored the rest of the programme and went sightseeing. You cannot analyse only the data that fit your model, and similar selective data manipulation has been exposed in other large-scale trials. I am a fan of Ben Goldacre’s book “Bad Pharma”, but it’s not just the industry that’s to blame; I wonder when the clinicians who misuse data on statins (and are often funded by the industry, so have a conflict of interest) will finally be brought to book. But to damn the pharmaceutical industry as a whole is risky. The baby may well go out with the bathwater.

Rod asks why we don’t learn the lessons of preliminary data being overtaken by new evidence, so that we realise drugs may do more harm than good. Actually this is a matter of scale. Drug trials have many phases: animal testing (though animals may develop side-effects that humans do not, and vice-versa); pharmacokinetic studies that look at how a drug is metabolised, as above; trials on volunteers; small-scale trials on patients; and finally larger scale trials. But if a drug has, say, a serious side-effect that occurs in one in 100,000, a standard trial will not detect it. To see 200 people develop it, enough to make you suspicious, you would have to give the drug to 20 million patients. You cannot do a trial that big, so serious but rare side-effects will only come out in the wash much later.
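
The arithmetic of scale can be put in a few lines of Python. This is only a sketch: the one-in-100,000 rate and the 200 cases come from the paragraph above, but the trial size of 3,000 is a hypothetical figure chosen for illustration, and the function names are mine.

```python
def expected_cases(one_in: int, patients: int) -> float:
    """Average number of cases if the side-effect strikes 1 patient in `one_in`."""
    return patients / one_in


def prob_at_least_one(one_in: int, patients: int) -> float:
    """Chance of seeing at least one case among `patients` independent patients."""
    return 1.0 - (1.0 - 1.0 / one_in) ** patients


def patients_needed(one_in: int, cases: int) -> int:
    """Patients who must be exposed before `cases` cases are expected."""
    return cases * one_in


ONE_IN = 100_000      # rate taken from the text: one in 100,000
TRIAL_SIZE = 3_000    # hypothetical trial size, an assumption for illustration

print(expected_cases(ONE_IN, TRIAL_SIZE))     # expected cases in the trial
print(prob_at_least_one(ONE_IN, TRIAL_SIZE))  # chance the trial sees even one
print(patients_needed(ONE_IN, 200))           # exposure needed for 200 cases
```

On these assumptions a 3,000-patient trial has roughly a 3% chance of seeing a single case, and one case on its own would prove nothing.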

Furthermore, the initial trials may have used the wrong patient cohort. Thus benoxaprofen, known as Opren, was never tested in the over-65s, which is where in the end the serious renal side-effects appeared because, surprise, older people metabolised the drug more slowly, so it accumulated. Phocomelia is a rare phenomenon, and appears at random, but it required many pregnant women to have had thalidomide, and then (as it was pre-internet) several unconnected case reports, to attribute effect to drug. If you weren’t pregnant then obviously that effect did not appear. The use of radiotherapy in the 1950s to treat a spinal condition, ankylosing spondylitis, was found to provoke leukaemia, but this did not appear immediately. In fact, a re-analysis I did suggested that many of those who developed it may well have been treated too late and with the wrong diagnosis. Thalidomide would have been abandoned anyway, because it produced significant peripheral nerve damage, and radiotherapy caused multiple skin cancers in the X-ray field which would have proved enough to finish radiotherapy as a treatment. But if something turns up that is unexpected then the only way you find it is, if you like, to suck it and see. And you may have to do much sucking to establish a causal relationship when the side-effect is rare.

We do learn from the lessons. After thalidomide and benoxaprofen, among others, drug trial regimes were tightened up; patient cohorts were changed; statistical analysis was improved. But now and again something will slip through for reasons only understood by using the infallible instrument of the retrospectoscope – the volunteer trial of TGN-1412 is a case in point. There were errors in conducting the trial, not least that all the subjects had the drug administered together, but no-one could have predicted the side-effect (multiple organ failure). However, once the details were known, anyone who had seen that constellation of symptoms and signs – as I had once – could have been in no doubt why it happened. And so subsequent trials were adjusted to avoid the risk.

Next – patents. There is no doubt that patents are a form of protectionism, but their existence is protean and I cannot see any reason for any sector of manufacturing industry not to use them, unless they are altruistic fools. The cost of developing new drugs is immense and while trials may be conducted by hospitals and university departments it’s the industry, by and large, that funds them. People do not realise that companies must take into account both the development costs and manufacturing costs. The development costs are skewed because a large number of compounds never reach the market because something goes wrong; they may not work, they may work no better than existing drugs, they produce early side-effects and so on. All these costs have to be written into the price of the drugs that get through all the hoops and reach the marketplace. And the manufacturing costs for some of the immune therapies are huge. Would you, as an entrepreneur, be happy to spend millions in development and initial production for some other company then to pirate your hard work? And anyway, patents expire eventually. People imagine that then the drug cost falls like a stone. Actually often it does not. There are many examples of drugs no longer made by their originators because it’s not worth their while. A series of generic manufacturers jump aboard, and then discover that it’s not worth their while either; maybe the profit is inadequate or the market too small. You then get left with a single generic maker who hikes the price. They may get their comeuppance, but there have been numerous examples of one monopoly replacing another.

Do companies try to charge as much as they can get away with? I am sure they do sometimes, but I was involved with the introduction of a new biologic agent for inflammatory arthritis which was priced well above its competitors (it had a different mode of action, so was not a ‘me-too’ drug). I pointed out that this created a financial disincentive to prescribing, and notice was taken.

So I think that drug companies are entitled to protect their investment, not least because their ‘profit’ covers the cost of the myriad preparations that don’t make it.

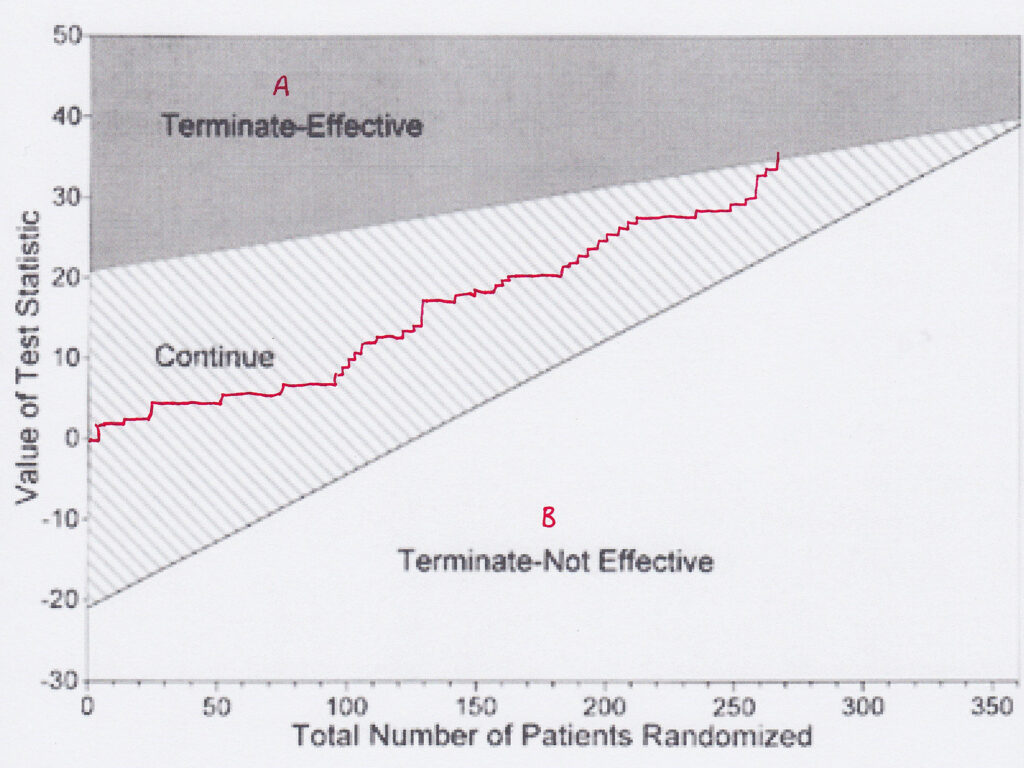

I agree that many new drugs are no better than those they replace. That they do succeed may be down to marketing and disinformation. If I read that a trial has shown that new drug B is ‘at least as good as’ old drug A, I want to know what evidence there is. Often the fault is in a misunderstanding of comparative sequential trials. In these, each patient is plotted on a graph according to whether drug A did better than drug B, or vice-versa. The plot shows, within confidence limits that you can set, when one drug is better than the other. However, if the graph line continues and fails to show a difference, it doesn’t mean there is no difference, merely that you have failed to prove that there is a difference. The two are not the same. Many such trials are interpreted, however, as showing no difference.
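
The gap between ‘no difference shown’ and ‘no difference’ can be sketched with a simple confidence interval. Note the assumptions: this uses an ordinary fixed-size two-arm comparison and a normal-approximation interval, not a sequential design, and the response counts are invented purely for illustration.

```python
import math


def difference_interval(responders_a: int, n_a: int,
                        responders_b: int, n_b: int,
                        z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% confidence interval for (rate A - rate B).

    Uses the normal approximation for a difference of two proportions.
    """
    p_a = responders_a / n_a
    p_b = responders_b / n_b
    se = math.sqrt(p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b)
    diff = p_a - p_b
    return diff - z * se, diff + z * se


# Hypothetical trial: 30 of 50 patients respond to drug A, 25 of 50 to drug B.
low, high = difference_interval(30, 50, 25, 50)
print(f"Difference in response rate lies between {low:.1%} and {high:.1%}")

# The interval includes zero, so no difference has been proved...
print("Includes zero:", low < 0 < high)
# ...but it also includes a large advantage for A, so equivalence is not proved either.
print("Includes a 25-point advantage for A:", low < 0.25 < high)
```

A trial like this one is compatible both with the drugs being identical and with one being substantially better, which is all that ‘failed to show a difference’ can honestly mean.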

Yes, huge amounts of money are spent on drugs that are useless, such as Avandia and Tamiflu. There is no excuse for withholding data (hello, I hear statins again). But such developments underpin my argument that successful drugs must finance the failures – and there’s no way of knowing which will be which when you start.

Conflict of interest alert. I have been to conferences that were heavily supported by drug companies, and even been paid to go. Companies were particularly keen to finance British rheumatologists’ trips to the American College of Rheumatology conferences; I never went to one of them. But is it necessarily a Bad Thing? Am I really being bought? Certainly in my specialty there were so many alternatives to choose from that one could only be accused of being bought by the whole lot. Did I go to conferences to learn about new drugs? A bit, but I was mindful of all the trial pitfalls on show, and so carried large quantities of pinches of salt. No, I went to meet my colleagues and learn from their experience, not only of drugs but of management issues, clinical problems and other important but drug-unrelated things, and learn about real and significant scientific breakthroughs. Even in science, things are not black and white; one line of investigation into a viral origin for rheumatoid arthritis occupied several departments for some years, but was eventually found to be false thanks to a contaminated assay. But if the conferences were not supported by the drug industry, many would in all probability not happen, which could be a disaster for medicine. I have no doubt that there are egregious attempts at bribery, but one has to look at the pros as well as the cons.

I once gave a talk to a group of general practitioners about non-steroidal anti-inflammatory drugs. It was in the evening, and a drug rep supplied food. I told them not to use his drug; there were others that were older and thus better-researched. The drug was Vioxx. This was before the scandal of data concealment. My opinion was based on scientific evidence (and lack of it). Had the food been missing I would not have had an audience. So inducements may have their benefits.

I can only agree with Rod that negative evidence is suppressed. It should be a requirement that all registered trials are published, positive, negative or abandoned. And like must be compared with like. Statins again. The benefit of many is suggested to be a 50% reduction in heart attacks. Sounds great, but 50% of what? If the absolute risk of a heart attack is 5%, then a 50% reduction takes it down to 2.5%. Not so great. Meanwhile the side-effects are always given as absolute risks, so benefit is overstated in relation to risk. In addition, it has proved impossible to access the source data of some research, so one cannot judge the veracity of the conclusions. The excuse given is commercial sensitivity or confidentiality. Not a good enough excuse. This should be properly investigated. There is accumulating evidence that the (small) effect of statins is not related to their cholesterol-lowering properties, and that the cholesterol-heart hypothesis rests on selective data-picking. Malcolm Kendrick and Uffe Ravnskov are two authors who have dissected this in detail. The astronomical cost of statins warrants a closer look. Well, you may think, they are actually cheap pills, but multiplying a low cost by a large number comes out worse than multiplying a large cost by a small one.
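
The relative-versus-absolute sum is short enough to write out. A minimal sketch: the 5% baseline and 50% relative reduction are the figures used above, while the ‘number needed to treat’ is a standard way of restating an absolute reduction that I have added; no time period is specified, so none is implied.

```python
def treated_risk(baseline_risk: float, relative_reduction: float) -> float:
    """Risk remaining after a given relative risk reduction."""
    return baseline_risk * (1.0 - relative_reduction)


def absolute_risk_reduction(baseline_risk: float, relative_reduction: float) -> float:
    """Percentage-point drop in risk: baseline minus treated risk."""
    return baseline_risk - treated_risk(baseline_risk, relative_reduction)


def number_needed_to_treat(baseline_risk: float, relative_reduction: float) -> float:
    """People who must take the drug for one of them to avoid the event."""
    return 1.0 / absolute_risk_reduction(baseline_risk, relative_reduction)


BASELINE = 0.05   # 5% absolute risk of a heart attack (figure from the text)
RELATIVE = 0.50   # the headline "50% reduction"

print(f"Risk on treatment: {treated_risk(BASELINE, RELATIVE):.1%}")
print(f"Absolute reduction: {absolute_risk_reduction(BASELINE, RELATIVE):.1%}")
print(f"Number needed to treat: {number_needed_to_treat(BASELINE, RELATIVE):.0f}")
```

On these figures around 40 people take the drug for one to benefit, which is the number that should be set beside side-effect rates quoted in absolute terms.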

I disagree with Rod that nationalisation of the industry is the answer; it would be rapidly bogged down in bureaucracy, and thus unworkable. So let’s stick to something easy. All drug trials should be registered (already coming in the U.K.), all registered drug trials should be published, all trials should have their source data open to independent review, and all trial authors should append truthful conflict of interest statements. Not only that, but the statistical methods of each trial should be transparent. The possibilities of inappropriate analysis, hiding of unwanted facts and outright fraud and deception would be dramatically reduced by these simple measures. Suck it and see?

Dr. Andrew Bamji is Gillies Archivist to the British Association of Plastic, Reconstructive and Aesthetic Surgeons. A retired consultant in rheumatology and rehabilitation, he was President of the British Society for Rheumatology from 2006 to 2008.
