This is a guest post by contributing editor Mike Hearn.

Last August, a cluster of fake scientific papers appeared in the journal Personal and Ubiquitous Computing. Each paper now carries a notice saying that it’s been been retracted “because the content of this article is nonsensical”.

This cluster appears to be created by the same group or person whom Daily Sceptic readers previously encountered in October. The papers are scientific-sounding gibberish spliced together with something about sports, hobbies or local economic development:

- “The conversion of traditional arts and crafts to modern art design under the background of 5G mobile communication network”, Linlin Niu

- “The application of twin network target tracking and support tensor machine in the evaluation of orienteering teaching”, Shenrong Wei

- “Application of heterogeneous execution body scheduling and VR technology for mimic cloud services in urban landscape design”, Liyuan Zhao

- “Application of deep and robust resource allocation for uncertain CSI in English distance teaching”, Li Junsheng

Therefore, the combination of LDPC and Polar codes has become the mainstream direction of the two technologies in the 5G scenario. This has further inspired and prompted a large number of researchers and scholars to start exploring and researching the two. In the development of Chinese modern art design culture, traditional art design culture is an important content…

Linlin Niu

This sudden lurch from 5G to Chinese modern art is the sort of text that cannot have been written by humans. Other clues are how the titles are obviously templated (“Application of A and B for X in Y”), how the citations are all on computing or electronics related subjects even when they appear in parts of the text related to Chinese art and packaging design, and of course the combination of extremely precise technical terms inserted into uselessly vague and ungrammatical statements about “the mainstream direction” of technology and how it’s “inspired and prompted” researchers.

An explanation surfaces when examining the affiliations of the authors. Linlin Niu (if she exists at all) is affiliated with the Academy of Arts, Zhengzhou Business University. Shenrong Wei works at the Department of Physical Education, Chang’an University. Li Junsheng is from the School of Foreign Studies, Weinan Normal University. Although the journal is a Western journal with Western editors, all the authors are Chinese, the non-computer related text is often to do with local Chinese issues and they are affiliated with departments you wouldn’t necessarily expect to be publishing in a foreign language.

The papers themselves appear to have been generated by a relatively sophisticated algorithm that’s got a large library of template paragraphs, terms, automated diagram and table generators and so on. At first I thought the program must be buggy to generate such sudden topic switches, but in reality it appears to be designed to create papers on two different topics at once. The first part is on whatever topic the targeted journal is about, and is designed to pass cursory inspection by editors. The second part is related to whatever the professor buying the paper actually “studies”. Once published the author can point colleagues to a paper published in a prestigious Western journal and perhaps cite it themselves in more normal papers, as ‘evidence’ of the relevance of whatever they’re doing to the high-tech world.

Prior events

If you’re a long-time reader of the Daily Sceptic, computer-generated gibberish being presented as peer-reviewed science won’t come as a surprise because this has happened several times before. Last year Springer had to retract 463 papers, but the problem isn’t restricted to one publisher. In July it was discovered that Elsevier had published a stream of papers in the journal Microprocessors and microsystems that were using nonsensical phrases generated from a thesaurus, e.g. automatically replacing the term artificial intelligence with “counterfeit consciousness”. This was not a unique event either, merely the first time the problem was noticed – searching Google Scholar for “counterfeit consciousness” returns hundreds of results spanning the last decade.

Computer-generated text is itself only the most extreme form of fake scientific paper. A remarkable number of medical research papers appear to contain Photoshopped images, and may well be reporting on experiments that never happened. Fake drug trials are even more concerning yet apparently prevalent, with a former editor of the BMJ asserting that the problem has become large-scale enough that it may be time to assume drug trials are fraudulent unless it can be proven otherwise. And of course, this is on top of the problem of claims by researchers that are nonsensical for methodological, statistical or logical reasons, which we encounter frequently when reviewing (especially) the Covid literature.

Zombie journals

Each time this happens, we take the opportunity to analyse the problem from a new angle. Last time we observed that journals appear to be increasingly automated, with the ‘fixes’ publishers propose for this problem being a form of automated spam filtering.

But why don’t these papers get caught by human editors? Scientific publishers like Springer and Elsevier appear to tolerate zombie journals: publications that look superficially real but which are in fact brain dead. They’re not being read by anyone, not even by their own editors, and where meaningful language should be there’s only rambling nonsense. The last round of papers published by this tech+sports group in the Arabian Journal of Geosciences lasted months before anyone noticed, strongly implying that the journal doesn’t have any readers at all. Instead they have become write-only media that exist purely so academics can publish things.

Publishers go to great lengths to imply otherwise. This particular journal’s Editor-in-Chief is Professor Peter Thomas, an academic with his own Wikipedia page. He’s currently affiliated with the “Manifesto Group”. Ironically, the content on the website for Manifesto Group consists exclusively of the following quote from a famous advertising executive:

People won’t listen to you if you’re not interesting, and you won’t be interesting unless you say things imaginatively, originally, freshly.

Bill Bernbach

This quote looks a bit odd given the flood of auto-generated ‘original’ papers Professor Thomas’s journal has signed-off on.

Clearly, he has never read the retracted articles given he later stated they were nonsensical. Instead, he blamed the volunteer peer reviewers for not complying with policy. And yet he isn’t on his own in editing this journal: the website lists a staggering 14 editors and 30 members of its international editorial board, which leads to the question of how not just one guy failed to notice they were signing off on garbage but all 45 of them failed to notice. For posterity, here are the people claiming to be editors yet who don’t appear to be reading the articles they publish:

Editors

Emilia Barakova, Eindhoven University of Technology, The Netherlands

Email: e.i.barakova@tue.nl

Alan Chamberlain, University of Nottingham, UK

Email: alan.chamberlain@nottingham.ac.uk

Mark Dunlop, University of Strathclyde, UK

Email: mark.dunlop@strath.ac.uk

Bin Guo, Northwestern Polytechnical University, China

Email: guobin.keio@gmail.com

Matt Jones, Swansea University, UK

Email: matt.jones@swansea.ac.uk

Eija Kaasinen, VTT, Finland

Email: eija.kaasinen@vtt.fi

Jofish Kaye, Mozilla, USA

Email: puc@jofish.com

Bo Li, Yunnan University, China

Email: boliphd@outlook.com

Robert D. Macredie, Brunel University, UK

Email: robert.macredie@brunel.ac.uk

Gabriela Marcu, University of Michigan, USA

Email: gmarcu@umich.edu

Yunchuan Sun, Beijing Normal University, China

Email: yunch@bnu.edu.cn

Alexandra Weilenmann, University of Gothenburg, Sweden

Email: weila@ituniv.se

Mikael Wiberg, Umea University, Sweden

Email: mwiberg@informatik.umu.se

Zhiwen Yu, Northwestern Polytechnical University, China

Email: zhiwenyu@nwpu.edu.cnInternational Editorial Board

Bert Arnrich, Bogazici University, Turkey

Tilde Bekker, Eindhoven University of Technology, The Netherlands

Victoria Bellotti, Palo Alto Research Center, USA

Rongfang Bie, Beijing Normal University, China

Mark Billinghurst, University of South Australia, Australia

José Bravo, University of Castilla-La Mancha, Spain

Luca Chittaro, HCI Lab, University of Udine, Italy

Paul Dourish, University of California, USA

Damianos Gavalas, University of the Aegean, Greece

Gheorghita Ghinea, Brunel University, UK

Karamjit S. Gill, University of Brighton, UK

Gillian Hayes, UC Irvine, USA

Kostas Karpouzis, National Technical University of Athens, Greece

James Katz, Boston University, USA

Rich Ling, Nanyang Technological University, Singapore

Patti Maes, MIT Media Laboratory, USA

Tom Martin, Virginia Tech, USA

Friedemann Mattern, ETH Zurich, Switzerland

John McCarthy, University College Cork, Ireland

José M. Noguera, University of Jaen, Spain

Jong Hyuk Park, Seoul National University of Science and Technology (SeoulTech), Korea

Francesco Piccialli, University of Naples “Federico II”, Italy

Reza Rawassizadeh, Dartmouth College, USA

Enrico Rukzio, Ulm University, Germany

Boon-Chong Seet, Auckland University of Technology, New Zealand

Elhadi M. Shakshuki, Acadia University, Canada

Phil Stenton, BBC R&D, UK

Chia-Wen Tsai, Ming Chuan University, Taiwan

Jean Vanderdonckt, LSM, Université catholique de Louvain, Belgium

Bieke Zaman, Meaningful Interactions Lab (Mintlab), KU Leuven, Belgium

What are academic outsiders meant to think when faced with such a large list of people yet also evidence that none of them appear to be reading their own journal? Does editorship of a scientific journal mean anything, or is this just like the Chinese papers – another way to pad resumés with fake work?

We may be tempted for a moment to think that as the papers were after all retracted, perhaps at least some of them are checking? But putting aside for a moment that an editor is meant to do editing work before publishing, from a quick flick through their most recent papers, we can see that nonsensical and irrelevant work is still getting through. For example:

- “Analysis of investment effect in Xinjiang from the perspective of nontraditional security“, which has nothing to do with personal computing. Instead it’s about China’s efforts to crack down on the ethnic Muslim population in Xinjiang. Abstract: “Terrorism poses a huge threat to economic development. Based on the data of terrorist activities provided by the Global Terrorism Database, this paper analyzes the temporal and spatial characteristics of terrorist activities in Xinjiang”. Amusingly, one of the author’s affiliations is literally stated as the “Research Center On Fictitious Economy and Data Science”.

- “Synergic deep learning model-based automated detection and classification of brain intracranial hemorrhage images in wearable networks“, which briefly mentions “wearable technology products” (e.g. Apple Watch) before going on to talk about automatically classifying CT scans in hospitals. The latter has nothing to do with the former.

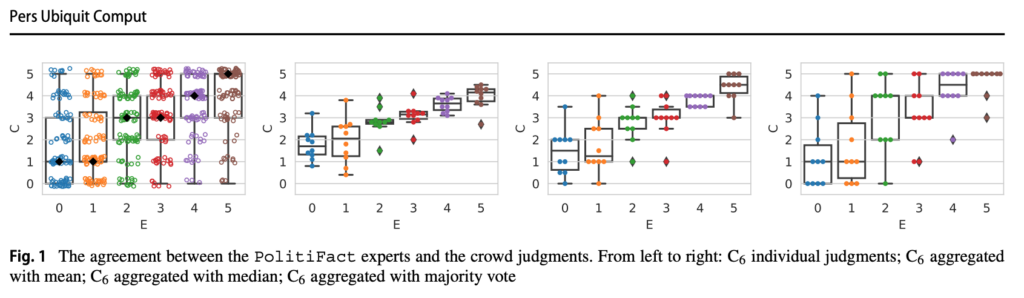

Perhaps inevitably, this journal has also published “Can the crowd judge truthfulness? A longitudinal study on recent misinformation about COVID-19“. This is one of a series of studies that asks pay-per-task workers on Amazon Mechanical Turk (defined as “the crowd”) to answer questions about Covid and U.S. politics. Their answers are then compared to the “ground truth” of “expert judgements” found on … Politifact (see chart below). As well as being irrelevant to the journal, this exemplar of good science had a sample size of just 10 survey takers despite requiring a team of nine academics and support “by a Facebook Research award, by the Australian Research Council, by a MIT International Science and Technology Initiatives Seed Fund and by the project HEaD – Higher Education and Development – (Region Friuli – Venezia Giulia)”. The worthlessness of their effort is, naturally, used as a justification for doing it again in another paper – “having a single statement evaluated by 10 distinct workers only does not guarantee a strong statistical power, and thus an experiment with a larger worker sample might be required to draw definitive conclusions”.

Root causes

The goal of a scientific journal is, theoretically, to communicate new scientific discoveries. The goal of peer review is to create quality through having people (and especially competitors) carefully check a piece of work. And rightly so – many fields rely heavily on peer review of various kinds, including my own field of software engineering. We do it because it finds lots of mistakes.

Given these goals, why does Springer tolerate the existence of journals within their fold that repeatedly publish auto-generated or irrelevant articles? Put simply, because they can. Journals like these aren’t really read by normal people looking for knowledge. Their customers are universities that need a way to define what success means in a planned reputation economy. Their function has been changing – no longer communication but, rather, being a source of artificial scarcity useful for establishing substitute price-like signals such as h-indexes and impact factors, which serve to bolster the reputations and credentials of academics and institutions.

This need can result in absurd outcomes. After a long campaign to make publishing open access (i.e., to allow taxpayers to read the articles they’ve already funded) – to its credit largely by scientists themselves – even that giant of science publishing Nature has announced that it will finally allow open access to its articles. The catch? Scientists have to pay them $10,000 for the privilege of their own work being made available as a PDF download. In the upside down world of science, publishers can demand scientists pay them for doing little beyond picking the coolest sounding claims. The actual review of the article will still be done by volunteer peer reviewers. Yet some of them will pay because the money comes from the public via Government funding of research, and because being published in Nature is a career-defining highlight. In the absence of genuine market economics tying research to utility, how else are they meant to rank themselves?

Meanwhile, peer review seems to have become a mere rubber stamp. The label can well mean a genuinely serious and critical review by professionals was done, but it might also mean nothing at all, and it’s not obvious how anyone is meant to quickly determine which it is. The steady decay of science into random claim generation is the inevitable consequence.

The author wishes to thank Will Jones and MTF for their careful peer review.

Mike Hearn is a former Google software engineer and author of this code review of Neil Ferguson’s pandemic modelling.

Profanity and abuse will be removed and may lead to a permanent ban.